Scope definition is the single variable that explains why identical web applications, built on the same tech stack by a team of the same size at the same hourly rate, ship in 3 months for one client and 6 months for another. PMI’s agile planning research backs this up with an uncomfortable statistic: between 64% and 80% of features shipped in software products are rarely or never used after launch. Each of those features consumed sprint cycles, QA hours, and project management bandwidth. When you’re paying $25–$40/hour per developer on an offshore team, the cost of building the wrong thing compounds fast. What prevents this waste, and separates a 12-week project from a 24-week one, is rigorous scope definition before a single line of code gets written.

How Agile Inverts the Triple Constraint

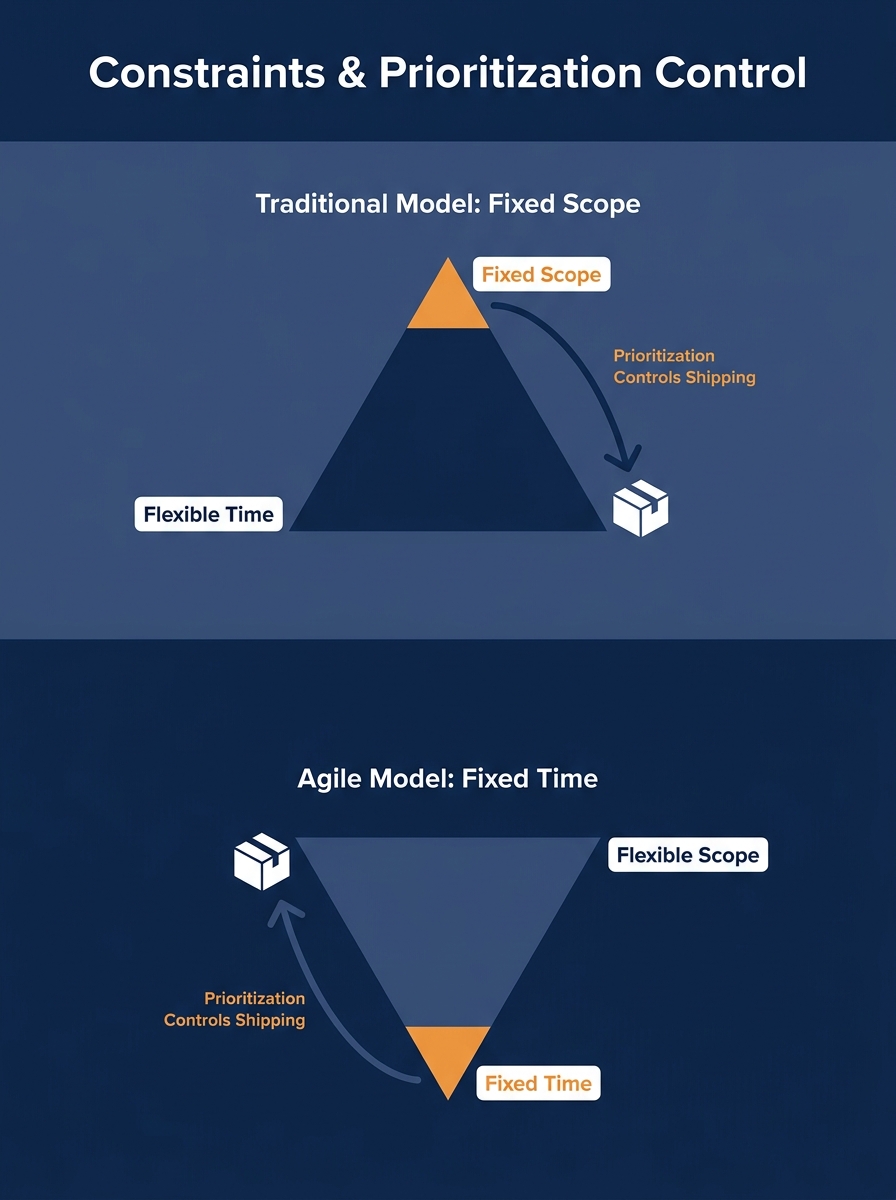

Traditional project management treats scope, time, and cost as three linked constraints. You fix two and the third floats. In waterfall, the usual approach was to lock scope first, fix the budget, and let the timeline stretch until everything got built.

Agile flips this. Time gets boxed into fixed-length sprints, typically two weeks. Resources stay relatively stable because you’re working with the same offshore team sprint over sprint. Scope becomes the flexible variable, the thing you deliberately expand, contract, or reorder based on what you learn each cycle.

This inversion matters because it changes how an agile timeline works in practice. Instead of asking “how long will it take to build everything we want?”, you ask “what’s the most valuable set of features we can ship within 12 weeks?” That question forces prioritization. And prioritization is where scope definition lives.

A team that treats everything as priority-one will build a 6-month project. A team that ruthlessly separates must-have from nice-to-have will build a 3-month one. The code quality, the talent pool, the technology choices all matter, but they’re secondary to this structural decision about what gets built and in what order.
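
The “most valuable set of features within 12 weeks” question can be made concrete with a small timebox planner. The sketch below is illustrative only: the `Feature` fields, the value scores, the effort figures, and the greedy value-per-week rule are all assumptions chosen for the example, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    business_value: int   # hypothetical relative score agreed with stakeholders
    effort_weeks: float   # hypothetical team-weeks of effort


def plan_timebox(features, capacity_weeks):
    """Greedily split features into (in_scope, deferred) by value per week of effort."""
    ranked = sorted(
        features,
        key=lambda f: f.business_value / f.effort_weeks,
        reverse=True,
    )
    in_scope, deferred, used = [], [], 0.0
    for feature in ranked:
        if used + feature.effort_weeks <= capacity_weeks:
            in_scope.append(feature)
            used += feature.effort_weeks
        else:
            deferred.append(feature)
    return in_scope, deferred


# Hypothetical backlog: 16 weeks of wishes against a 12-week timebox.
backlog = [
    Feature("user login", 9, 2),
    Feature("booking flow", 10, 4),
    Feature("payments", 8, 3),
    Feature("reporting dashboard", 5, 4),
    Feature("admin audit log", 3, 2),
    Feature("email notifications", 6, 1),
]

in_scope, deferred = plan_timebox(backlog, capacity_weeks=12)
print("Build now:", [f.name for f in in_scope])
print("Deferred: ", [f.name for f in deferred])
```

A greedy ranking is not guaranteed to find the best possible combination, but it forces the conversation the timebox is meant to force: something has to land on the deferred list.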

What a Scope Document Actually Pins Down

A scope document isn’t a feature list. That’s the misunderstanding that costs teams months. A feature list tells you what to build. A scope document tells you what to build, what to explicitly exclude, how to handle changes, and what “done” looks like for each deliverable.

As WalkingTree Technologies describes the process, the first stage is planning and strategizing, and the second is goal definition, task subdivision, and timeline estimation. Development comes third. Skipping or compressing those first two stages is how projects balloon from 12 weeks to 24.

A functional scope document for offshore project planning includes:

- Core user stories ranked by business value, with acceptance criteria attached to each one

- An explicit out-of-scope list that names features you’ve discussed and deliberately deferred

- A change management protocol defining who can approve scope additions and what impact assessment is required before anything new enters a sprint

- Technical constraints and assumptions covering target platforms, browser support, third-party integrations, and performance benchmarks

- Definition of done for each milestone, not only for the final deliverable

That last point is where many outsourced projects go sideways. If “done” means “feature works in the dev environment” to your offshore team but “feature works in production with monitoring and error handling” to you, you’ve got a 2-3 week gap hiding in every single milestone. Let that gap recur across even a handful of milestones and you’ve found your missing three months.
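
One way to keep those five elements from quietly going missing is to treat the scope document as structured data and check it for holes. This is a minimal sketch under stated assumptions: the class names, field names, and the `gaps()` check are hypothetical, and the sample content is invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class UserStory:
    title: str
    business_value: int
    acceptance_criteria: list = field(default_factory=list)


@dataclass
class Milestone:
    name: str
    definition_of_done: list = field(default_factory=list)


@dataclass
class ScopeDocument:
    stories: list            # core user stories, ranked by business value
    out_of_scope: list       # features discussed and deliberately deferred
    change_approvers: list   # who may approve scope additions
    constraints: dict        # platforms, browsers, integrations, benchmarks
    milestones: list         # each with its own definition of done

    def gaps(self):
        """Return human-readable problems that would leave 'done' open to interpretation."""
        problems = []
        for story in self.stories:
            if not story.acceptance_criteria:
                problems.append(f"story '{story.title}' has no acceptance criteria")
        if not self.out_of_scope:
            problems.append("out-of-scope list is empty")
        if not self.change_approvers:
            problems.append("no one is named to approve scope changes")
        for milestone in self.milestones:
            if not milestone.definition_of_done:
                problems.append(f"milestone '{milestone.name}' has no definition of done")
        return problems


# Hypothetical example document with two deliberate holes.
doc = ScopeDocument(
    stories=[
        UserStory("Customer books an appointment", 10, ["Double bookings are rejected"]),
        UserStory("Customer resets password", 6),
    ],
    out_of_scope=["Native mobile app", "Multi-currency pricing"],
    change_approvers=["in-house product owner"],
    constraints={"browsers": "latest two versions of Chrome, Safari, Edge"},
    milestones=[
        Milestone("Booking MVP", ["Deployed to production", "Monitoring and error handling in place"]),
        Milestone("Payments"),
    ],
)

for problem in doc.gaps():
    print(problem)
```

The check flags the story without acceptance criteria and the milestone without a definition of done, which are exactly the places where the dev-environment versus production disagreement tends to hide.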

This is exactly why preventing communication breakdowns early in an outsourced engagement has direct timeline consequences. The scope document creates what QuestSys calls a common language between business leaders, developers, and end users, turning high-level ideas into specific commitments that reduce the likelihood of misunderstandings.

The Prototype Gate

Prototype-first outsourcing compresses the most expensive part of any project: the discovery of what you actually need.

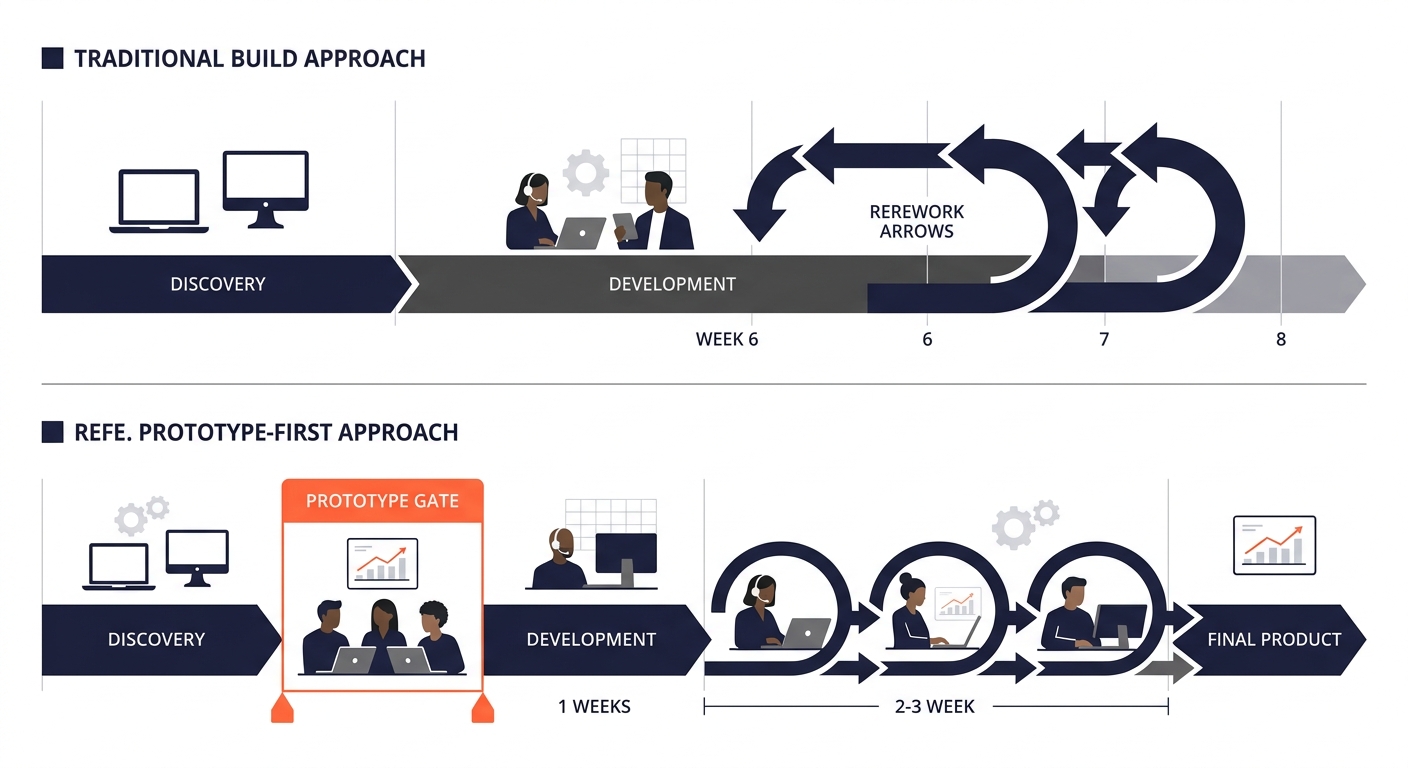

The traditional approach runs like this: write requirements, send them to the dev team, wait 4-6 weeks for a working build, discover that what you described isn’t what you meant, revise, rebuild. That cycle eats 6-8 weeks on its own, and it often repeats.

A prototype gate short-circuits this by producing a clickable, testable representation of the application within the first 2-3 weeks. This isn’t a wireframe deck or a static mockup. It’s an interactive prototype that lets stakeholders click through actual workflows, spot missing steps, and identify UX problems before any backend logic exists.

The economics are straightforward. An interactive prototype takes one designer and one frontend developer roughly 80-120 hours to build. At Philippine rates of $25-$40 per hour, that’s $2,000-$4,800. The alternative, building production code against assumptions that turn out to be wrong, costs $15,000-$30,000 in rework on a typical custom web app.
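
The arithmetic behind those figures is short enough to check directly. The sketch below uses only numbers from this article (80-120 hours, $25-$40/hour, $15,000-$30,000 of rework); the variable names are illustrative.

```python
# Figures from the article: prototype build hours, offshore hourly rates,
# and the rework cost of building against wrong assumptions.
HOURS = (80, 120)
RATE_USD = (25, 40)
REWORK_USD = (15_000, 30_000)

# Cheapest case: fewest hours at the lowest rate. Priciest: most hours at the highest rate.
prototype_usd = (HOURS[0] * RATE_USD[0], HOURS[1] * RATE_USD[1])

# Most pessimistic comparison: priciest prototype against cheapest rework.
worst_case_ratio = REWORK_USD[0] / prototype_usd[1]
# Most optimistic comparison: cheapest prototype against priciest rework.
best_case_ratio = REWORK_USD[1] / prototype_usd[0]

print(f"Prototype: ${prototype_usd[0]:,}-${prototype_usd[1]:,}")
print(f"Rework costs {worst_case_ratio:.1f}x to {best_case_ratio:.1f}x as much")
```

Even in the least favorable pairing, the rework bill is roughly three times the prototype bill.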

When your team runs stakeholder workshops during the prototype phase, you compress weeks of async back-and-forth into focused working sessions. Timezones make these sessions feasible if you schedule them deliberately: Manila runs only 2-3 hours behind Australia’s east coast, so most of the business day is shared, while US West Coast clients get a narrow window where their late afternoon meets Manila’s early morning.

The prototype gate turns arguments about requirements into demonstrations. You stop debating what a feature should do and start pointing at a screen.

Scope Creep Adds Months, Not Weeks

Research consistently shows that 33-37% of projects experience scope creep. That number undersells the problem because it treats all scope changes equally. Adding a “forgot to mention” login feature in week 2 is a different animal than adding a reporting dashboard in week 10.

The mechanism works like this: every feature added after sprint planning triggers a cascade. The new feature needs its own user stories, its own acceptance criteria, its own QA test cases. It may conflict with architectural decisions already made. It definitely displaces planned work from the current sprint into the next one, which displaces that sprint’s work into the one after, and so on.
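
The displacement cascade is easy to see in a toy model. The sketch below is a simplification under stated assumptions: work items are packed into sprints in priority order, sprint capacity is a fixed 13 story points, and the item names and point values are invented.

```python
def pack_sprints(items, capacity):
    """Assign ordered (name, points) items to sprints without exceeding capacity."""
    sprints, current, used = [], [], 0
    for name, points in items:
        if current and used + points > capacity:
            sprints.append(current)
            current, used = [], 0
        current.append(name)
        used += points
    if current:
        sprints.append(current)
    return sprints


def sprint_of(sprints):
    """Map each item name to its 1-based sprint number."""
    return {
        name: number
        for number, sprint in enumerate(sprints, start=1)
        for name in sprint
    }


CAPACITY = 13  # hypothetical story points per two-week sprint

planned = [
    ("login", 8), ("profile", 5),
    ("booking", 8), ("calendar", 5),
    ("payments", 8), ("receipts", 5),
]

# A stakeholder adds one feature that must land right after sprint 1.
changed = planned[:2] + [("reporting dashboard", 8)] + planned[2:]

before = sprint_of(pack_sprints(planned, CAPACITY))
after = sprint_of(pack_sprints(changed, CAPACITY))
displaced = [name for name, _ in planned if after[name] > before[name]]

print("Sprints before:", max(before.values()))
print("Sprints after: ", max(after.values()))
print("Displaced:     ", displaced)
```

In this toy plan a single addition turns three sprints into four and moves every item scheduled after it, which is the cascade in miniature.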

A well-managed offshore project planning process handles this through a formal change request system. The request goes to the project manager, who assesses the impact on timeline and budget, documents the tradeoff (this feature gets added, these two get deferred), and requires stakeholder sign-off before the change enters a sprint.
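
The gate described above can be sketched as a small record with a readiness check. Everything here is hypothetical: the `ChangeRequest` fields, the rule that a tradeoff means either deferred work or an explicit timeline extension, and the sample requests.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ChangeRequest:
    feature: str
    added_weeks: Optional[float] = None      # timeline impact, filled in by the project manager
    added_cost_usd: Optional[float] = None   # budget impact, filled in by the project manager
    deferred: list = field(default_factory=list)  # work pushed out in exchange
    approved_by: Optional[str] = None        # stakeholder sign-off

    def blockers(self):
        """List what is still missing before this change may enter a sprint."""
        issues = []
        if self.added_weeks is None or self.added_cost_usd is None:
            issues.append("impact on timeline and budget not assessed")
        # Assumption: a documented tradeoff is either deferred work
        # or an explicit extension of the timeline.
        if not self.deferred and not self.added_weeks:
            issues.append("tradeoff not documented")
        if self.approved_by is None:
            issues.append("no stakeholder sign-off")
        return issues

    def can_enter_sprint(self):
        return not self.blockers()


# A casual chat request versus a request that went through the process.
casual = ChangeRequest("CSV export")
formal = ChangeRequest(
    "reporting dashboard",
    added_weeks=0,
    added_cost_usd=0,
    deferred=["admin audit log", "receipt PDFs"],
    approved_by="product owner",
)

print(casual.can_enter_sprint(), casual.blockers())
print(formal.can_enter_sprint(), formal.blockers())
```

The point of the structure is less the code than the defaults: a request starts out blocked and has to earn its way into a sprint.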

Without this system, scope changes arrive as Slack messages. “Hey, can we also add X?” The developer says yes because they want to be helpful. Nobody tracks the cumulative impact. Four “small” additions later, you’re two months behind.

Warning: A 10-15% contingency budget sounds like padding. It’s actually the cheapest insurance against mid-project scope additions that would otherwise push your launch date by 4-6 weeks.

This is where the hybrid model of keeping strategy in-house while outsourcing execution pays off. Your in-house strategist owns the scope document and serves as the gatekeeper for changes. The offshore team builds what’s been approved, without the ambiguity of trying to interpret whether a stakeholder’s casual suggestion is a real requirement.

Bottom-Up Estimation vs. the Gut-Feel Calendar

The three primary methods for estimating software development costs and timelines are analogous, parametric, and bottom-up estimation. The method you choose directly affects whether your 3-month target is realistic or fantasy.

Analogous estimation looks at similar past projects and extrapolates. “Our last e-commerce build took 14 weeks, so this one will probably take about the same.” It’s fast but imprecise, especially when the new project differs in ways that aren’t immediately obvious, like a different payment gateway integration or a more complex user permissions model.

Parametric estimation applies a formula: divide the total story points in the backlog by the story points the team completes per sprint to get a sprint count, then convert sprints into calendar time. It’s more reliable than analogy but depends on having accurate story point estimates, which are notoriously hard to get right for novel features the team hasn’t built before.
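
A minimal sketch of the parametric calculation, assuming two-week sprints as described earlier. The backlog size and velocity figures are hypothetical and chosen only to show how sensitive the result is to velocity.

```python
import math


def parametric_estimate(backlog_points, velocity_per_sprint, sprint_weeks=2):
    """Return (sprints, calendar_weeks) needed to burn down the backlog."""
    sprints = math.ceil(backlog_points / velocity_per_sprint)
    return sprints, sprints * sprint_weeks


# Hypothetical 180-point backlog at two different velocities.
on_target = parametric_estimate(180, 30)
slipped = parametric_estimate(180, 24)

print("At 30 points/sprint:", on_target)
print("At 24 points/sprint:", slipped)
```

With these numbers, a velocity that is 20% lower than assumed moves the plan from 12 weeks to 16, which is why the method is only as good as the point estimates feeding it.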

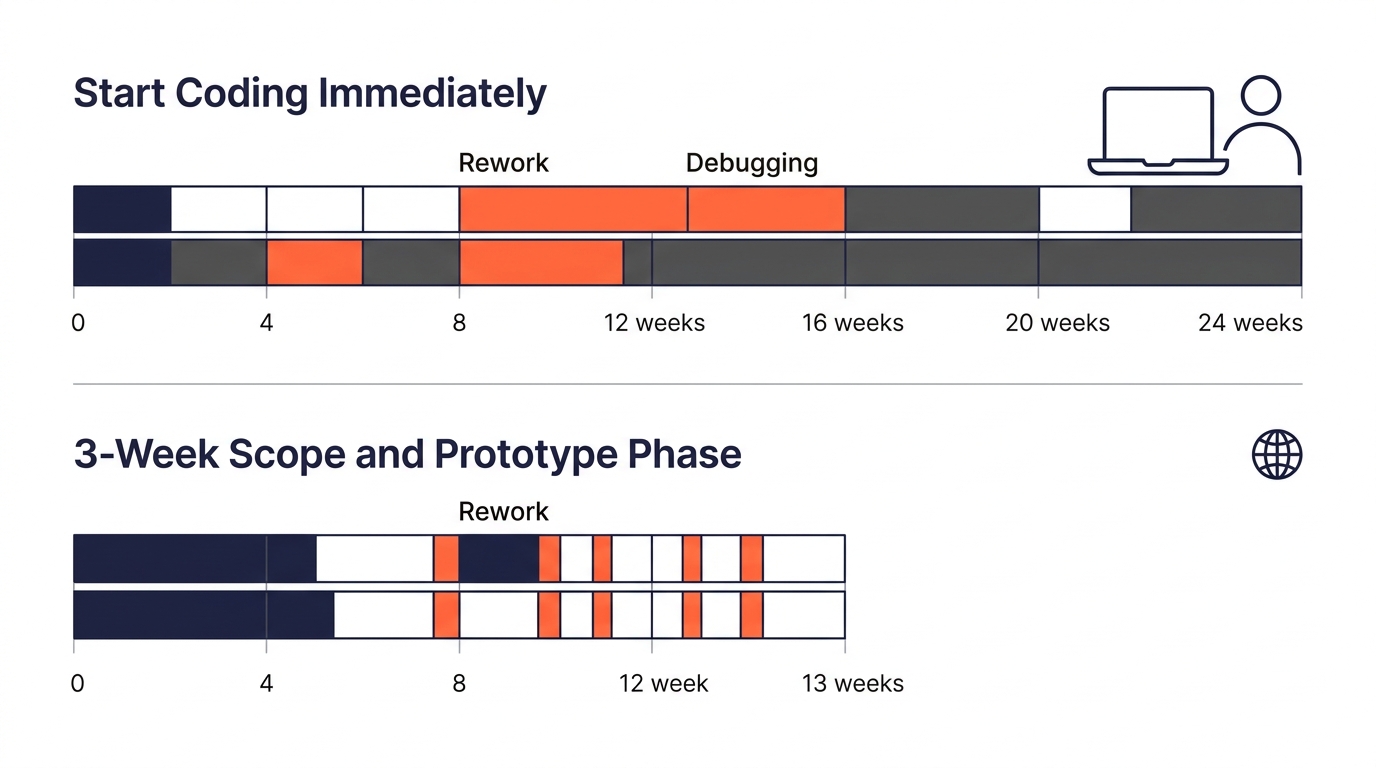

Bottom-up estimation breaks every feature into individual tasks, estimates each task separately, and sums the total. It’s the slowest method and the most accurate. For a 3-month project, the bottom-up approach typically takes 1-2 weeks of the team’s time before development starts. That feels like a lot when you’re eager to start building, but projects that skip this step almost always exceed their original timeline by more than the 1-2 weeks they “saved.”
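
In code, bottom-up estimation is little more than a nested sum. The sketch below is illustrative: the features, task names, hour figures, and team capacity (two developers at 35 productive hours a week) are all assumptions, and a real estimate would cover every feature in scope.

```python
# Hypothetical task-level estimates in hours, grouped by feature.
tasks = {
    "user login": {"auth endpoints": 16, "login UI": 12, "QA test cases": 8},
    "booking flow": {"availability API": 40, "calendar UI": 32, "QA test cases": 16},
    "payments": {"gateway integration": 40, "checkout UI": 24, "QA test cases": 16},
}

# Sum tasks into feature totals, then features into a project total.
feature_hours = {feature: sum(parts.values()) for feature, parts in tasks.items()}
total_hours = sum(feature_hours.values())

# Assumed capacity: 2 developers x 35 productive hours per week.
TEAM_HOURS_PER_WEEK = 2 * 35
calendar_weeks = total_hours / TEAM_HOURS_PER_WEEK

for feature, hours in feature_hours.items():
    print(f"{feature}: {hours} h")
print(f"Total: {total_hours} h, about {calendar_weeks:.1f} team-weeks")
```

The value of the exercise is in the task list itself: a missing row, such as QA or deployment work, shows up as a gap in the table rather than as a surprise in week 10.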

So here’s the pattern: projects that hit a 3-month delivery window spend 3-4 weeks on scope definition, prototyping, and estimation before writing production code. Projects that try to “save time” by starting development immediately tend to finish in 5-6 months. The investment in upfront planning doesn’t add to the timeline. It compresses it.

When you’re working with an offshore team, structured quality assurance processes built into the scope document prevent the late-stage testing crunch that derails final delivery dates.

Where the Model Breaks

This scope-first mechanism works well for custom web apps with identifiable user workflows: SaaS dashboards, client portals, booking systems, internal tools. It works less well in two specific situations.

The first is genuine product discovery. If you’re building something where the target user’s needs are unknown and the product concept itself is experimental, locking scope early is counterproductive. You need a longer exploratory phase with rapid iteration, and a 6-month timeline might be the honest answer. Trying to force a 3-month window onto a product-discovery project produces an app that ships on time but solves the wrong problem.

The second is integration-heavy projects. When your custom web app needs to connect to 5+ third-party APIs, legacy databases, or enterprise systems with their own release cycles, the timeline becomes partly dependent on external factors you can’t scope away. An API vendor’s documentation might be wrong. A legacy system might not support the data format you assumed. These risks don’t respond to better scope definition because they originate outside your project boundary. Budget an extra 3-4 weeks of buffer for each critical integration you don’t control.
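
The buffer rule above translates into a simple range calculation. This sketch assumes the buffers add up serially, which is the conservative reading; if integrations can be worked in parallel the real figure may be lower. The function name and the sample inputs are hypothetical.

```python
def buffered_weeks(base_weeks, critical_integrations, buffer_per_integration=(3, 4)):
    """Return the (low, high) timeline in weeks after adding a buffer per integration."""
    low, high = buffer_per_integration
    return (
        base_weeks + critical_integrations * low,
        base_weeks + critical_integrations * high,
    )


# A 12-week build with two critical integrations outside the team's control.
print(buffered_weeks(12, 2))
```

For a 12-week build with two such integrations, the honest range comes out at 18 to 20 weeks.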

For everything else, the math is consistent. Teams that invest 20-25% of total project duration in scope definition, prototyping, and estimation deliver faster than teams that invest 5% or less. The scope document converts calendar time into shipped features instead of rework cycles. When it’s precise, your 12-week offshore build stays at 12 weeks. When it’s vague, the same team building the same application will take 24 weeks and deliver something nobody’s fully satisfied with.