Uber’s CTO burned through the company’s entire 2026 AI budget before March ended. That detail, reported by Forbes in late April, got buried under the week’s bigger number: Google, Amazon, Microsoft, and Meta collectively spent over $130 billion on AI infrastructure in a single quarter. Alphabet alone has committed $175 billion to $185 billion in capital spending for the full year, with Sundar Pichai confirming that “just over half” goes directly to computing power for AI. The companies have already signaled that 2027 capex will “significantly increase” from there.

If you manage Philippine dev teams building web apps, or coordinate offshore marketing execution for agency clients, these numbers reshape your cost structure in ways the headlines don’t make obvious.

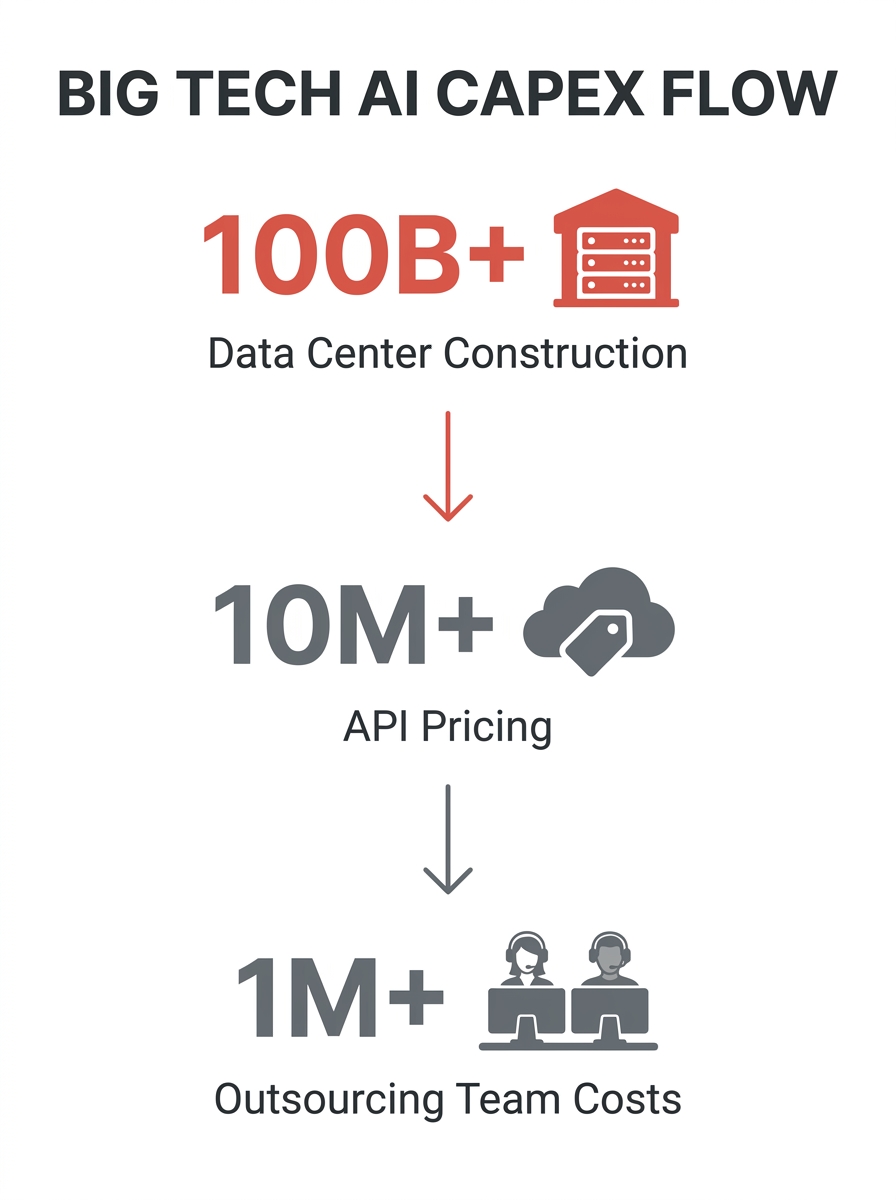

Here’s how that spending wave actually moves through the stack you operate.

The Earnings Week That Rewrote the Math

On April 29, four of the five largest tech companies on earth reported quarterly capital expenditures that, combined, exceeded the GDP of most countries. The New York Times coverage flagged a specific risk: analysts warned that much of this spending is justified by demand from two young companies, OpenAI and Anthropic, both of which are still burning cash at extraordinary rates. The Motley Fool reported on May 3 that OpenAI missed its revenue and user targets. The New Yorker ran a piece the following day asking the question the entire industry is dancing around: when will any of this actually make money?

For outsourcing operators, the relevant signal here isn’t whether Alphabet’s stock ticked up or down. The infrastructure layer your tools depend on is being built on assumptions that may not hold. Every AI-powered workflow you’ve integrated into your outsourcing stack, from the code completion tools your Philippine developers use to the agent-assist systems cutting operational costs by 15% in BPO centers, runs on top of this infrastructure. The companies building it are spending like demand is infinite. Analysts aren’t so sure.

The immediate practical risk is API pricing volatility. In most production-scale enterprises, model spending accounts for roughly 10% to 20% of total AI budgets. When infrastructure providers are racing to recoup $130 billion in quarterly spending, the per-token costs your team relies on today aren’t guaranteed tomorrow.
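Because models are only a slice of the budget, a per-token price change hits the total at a fraction of its headline size. The minimal Python sketch below makes that concrete under one stated assumption: only the model-spend share reprices, and every other line item in the AI budget (tooling, staff time, hosting) stays flat. The `budget_impact` function and the $100,000 budget are hypothetical illustrations, not vendor data.

```python
def budget_impact(total_ai_budget: float, model_share: float, price_change: float) -> float:
    """Return the new total AI budget after model prices change.

    Hypothetical assumption: only the model-spend portion reprices;
    every other line item in the AI budget stays constant.
    """
    if not 0.0 <= model_share <= 1.0:
        raise ValueError("model_share must be between 0 and 1")
    model_spend = total_ai_budget * model_share
    other_spend = total_ai_budget - model_spend
    return other_spend + model_spend * (1.0 + price_change)


if __name__ == "__main__":
    budget = 100_000.0  # hypothetical annual AI budget in USD
    for share in (0.10, 0.20):  # the 10% to 20% model-spend range cited above
        new_total = budget_impact(budget, share, price_change=0.50)
        pct = (new_total / budget - 1.0) * 100
        print(f"model share {share:.0%}: a 50% token price rise adds {pct:.1f}% to the total budget")
```

At a 10% model share, a 50% price rise lifts the total by about 5%; at 20%, by about 10%. The share caps the exposure, but it does not remove it.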

How $185 Billion in Google Capex Reaches Your Cebu Dev Team

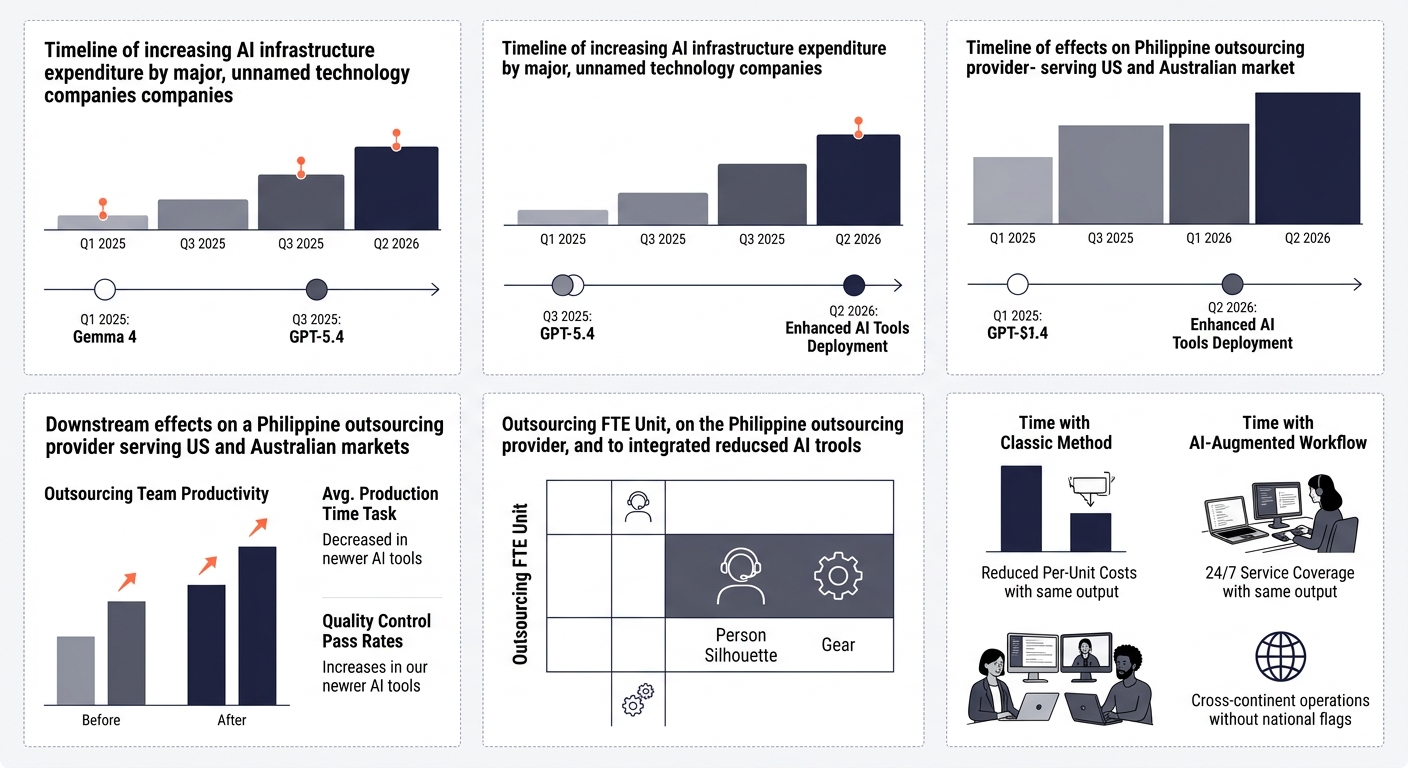

Google commits $175–185 billion in 2026 capex. A significant portion of that builds out data centers and accelerator clusters (Google’s own TPUs as well as GPUs). Those clusters power Gemini 3.1 Pro (which topped reasoning benchmarks at 94.3% on GPQA Diamond when it launched February 19) and Gemini 3.1 Flash-Lite (released in March, optimized for low-cost, high-speed inference). They also power the open-weight Gemma 4, released in April under Apache 2.0 licensing and optimized for agentic workflows.

Here’s where it gets interesting for outsourcing. Philippine development teams have already started building with Gemma 4 specifically because it lets them skip expensive API calls. When your agency builds client projects where per-request API costs eat into margins, running a fine-tuned open model locally changes the unit economics entirely. We’ve covered how Philippine developers use AI-powered architecture to build things US agencies struggle to replicate in-house, and Gemma 4 accelerated that trend considerably.
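One rough way to frame that shift in unit economics is a break-even volume: the monthly request count above which a flat-cost self-hosted model becomes cheaper than pay-per-request API calls. The sketch below assumes a deliberately simple, hypothetical cost model (a flat monthly hosting fee plus constant per-request costs, priced per 1,000 requests) and ignores fine-tuning effort, engineering time, and quality differences between models. The function name and all figures are illustrative.

```python
import math


def break_even_requests(monthly_hosting_cost: float,
                        api_cost_per_1k: float,
                        local_cost_per_1k: float = 0.0) -> int:
    """Smallest monthly request volume at which self-hosting costs no more than the API.

    Hypothetical cost model: self-hosting is a flat monthly fee plus a constant
    per-request cost; the API is a constant per-request cost. Prices are per
    1,000 requests.
    """
    margin_per_1k = api_cost_per_1k - local_cost_per_1k
    if margin_per_1k <= 0:
        raise ValueError("self-hosting never breaks even unless its per-request cost is lower")
    # Solve hosting + (n / 1000) * local <= (n / 1000) * api for the smallest whole n.
    return math.ceil(monthly_hosting_cost / margin_per_1k * 1000)


if __name__ == "__main__":
    # Hypothetical figures: $600/month for a GPU host, $2.00 vs $0.50 per 1,000 requests.
    print(break_even_requests(600.0, api_cost_per_1k=2.0, local_cost_per_1k=0.5))  # 400000
```

Below that volume, paying per API call is cheaper; above it, the self-hosted model wins on cost alone, and every additional request widens the margin.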

A fully loaded senior developer in the Philippines costs $6,000 to $7,500 per month including AI tooling, statutory benefits, and management overhead. The US equivalent runs $180,000 to $210,000 annually, or $15,000 to $17,500 per month. That gap has existed for years. What’s changed is that the Philippine developer now has access to the same AI infrastructure as the American one, and sometimes adopts it faster, because teams in Manila and Cebu picked up tools like Copilot and Cursor with fewer legacy process constraints to work around.

In 2026, the AI-augmented outsourcing picture looks like this: your offshore team uses the same models, the same tooling, and the same deployment infrastructure as a team in San Francisco, at a fraction of the labor cost. Infrastructure spending by Google and OpenAI democratized the tooling, and your outsourcing budget should account for this convergence.

The Vendor Lock-in Trap Hiding Inside Model Deprecation Cycles

OpenAI launched GPT-5.4 on March 5 with a 1-million-token context window and autonomous multi-step execution. It scored 75% on the OSWorld-V benchmark, above the human baseline of 72.4%. By March 17, they’d released mini and nano variants for cost-sensitive applications. And then they started deprecating older models: GPT-4o, GPT-4.1, and GPT-5 Instant editions were retired, with GPT-4o fully gone after April 3.

If your outsourcing workflows depend on specific model behaviors (say, your Philippine team built QA automation around GPT-4o’s quirks, or your virtual assistants run prompt chains tuned to a particular model version), deprecation means rework. Rework means cost. And OpenAI gave roughly seven weeks between announcing retirement and the hard cutoff.

Warning: Model deprecation timelines are shrinking. If your outsourced teams have workflows pinned to specific model versions, build migration playbooks now, not after the deprecation notice lands.
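One concrete piece of a migration playbook is an inventory of which workflows are pinned to which model, checked against each vendor’s announced retirement date. The sketch below assumes you maintain that inventory yourself; the workflow names, model identifiers, and dates are hypothetical placeholders rather than vendor data, and the 60-day lead time is an arbitrary choice you would tune.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class PinnedWorkflow:
    name: str
    model: str                  # hypothetical model identifier
    retirement: Optional[date]  # None if no retirement has been announced


def needs_migration(workflows: Iterable[PinnedWorkflow], today: date,
                    lead_time_days: int = 60) -> List[PinnedWorkflow]:
    """Return workflows whose pinned model retires within the lead time (or already has)."""
    cutoff = today + timedelta(days=lead_time_days)
    flagged = [w for w in workflows if w.retirement is not None and w.retirement <= cutoff]
    # Soonest retirement first, so the most urgent rework sits at the top.
    return sorted(flagged, key=lambda w: w.retirement)


if __name__ == "__main__":
    inventory = [  # hypothetical inventory entries
        PinnedWorkflow("qa-regression-suite", "vendor-model-a", date(2026, 4, 3)),
        PinnedWorkflow("va-email-triage", "vendor-model-b", date(2026, 9, 30)),
        PinnedWorkflow("code-review-bot", "vendor-model-c", None),
    ]
    for w in needs_migration(inventory, today=date(2026, 3, 1)):
        print(f"{w.name}: migrate off {w.model} before {w.retirement.isoformat()}")
```

Run on a schedule, a check like this turns a deprecation notice into a dated task list instead of a surprise.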

This is the enterprise AI spending implication that matters most for outsourcing operators: the companies spending $130 billion per quarter also control your model access. They decide when to deprecate. They set the per-token price. They choose which capabilities get reserved for premium tiers.

The emerging response from teams that treat AI adoption by their Philippine staff as a strategic function is dual-sourcing. Run production workloads on commercial APIs where you need reliability and SLAs. Build experimental and client-facing prototypes on open-weight models like Gemma 4 or Llama variants, where you control the deployment. This isn’t theoretical. It’s the actual pattern showing up in AI-powered outsourcing operations across Southeast Asia, and it works because open models have reached the quality threshold where “good enough” genuinely is good enough for most client-facing work.
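The dual-sourcing split can be expressed as a small routing rule with fallback. In this sketch the backend names, the two workload tiers, and the fallback order are all hypothetical; the callables stand in for whatever commercial API client or self-hosted open-weight server a team actually runs.

```python
from typing import Callable, Dict, List

Backend = Callable[[str], str]

# Hypothetical routing table: production work prefers the SLA-backed commercial
# API; prototypes prefer the self-hosted open-weight model. Each tier falls back
# to the other backend.
ROUTES: Dict[str, List[str]] = {
    "production": ["commercial_api", "open_weight_local"],
    "prototype": ["open_weight_local", "commercial_api"],
}


def route(tier: str, prompt: str, backends: Dict[str, Backend]) -> str:
    """Try each backend for the tier in order, falling back on any failure.

    Raises KeyError for an unknown tier, RuntimeError if every backend fails.
    """
    errors = []
    for name in ROUTES[tier]:
        try:
            return backends[name](prompt)
        except Exception as exc:  # e.g. a retired model, rate limit, outage, or missing backend
            errors.append(f"{name}: {exc!r}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


if __name__ == "__main__":
    def retired_api(prompt: str) -> str:
        raise ConnectionError("model retired")  # simulates a deprecated model

    def local_model(prompt: str) -> str:
        return f"local answer to {prompt!r}"

    print(route("production", "summarize ticket",
                {"commercial_api": retired_api, "open_weight_local": local_model}))
```

The design choice is that the routing table, not the calling code, encodes which provider is primary, so a pricing or deprecation change means editing one mapping.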

Outsourcing Budget Allocation When Compute Outpaces Headcount

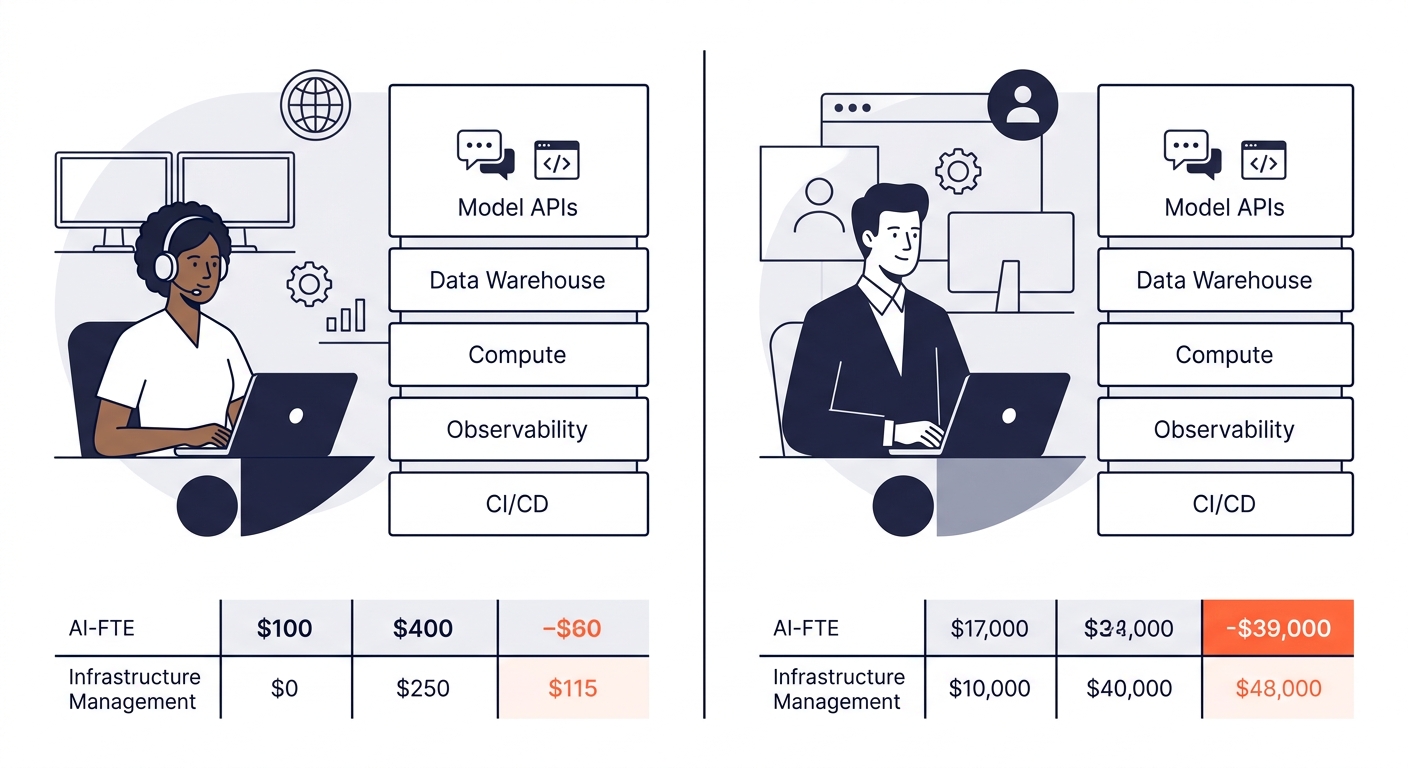

Swan AI’s CEO publicly stated that the company burned through its entire AI budget in two months, then praised the autonomy that comes from scaling with intelligence rather than headcount. Forbes framed the trend starkly: AI compute costs now surpass human costs at several major enterprises.

For an SMB or mid-market agency outsourcing to the Philippines, this creates a counterintuitive opportunity. Your Philippine team’s fully loaded cost hasn’t inflated at the same rate as API and compute spending. The labor cost differential between US and Philippine markets, which made outsourcing attractive in the first place, now compounds with a second arbitrage: your offshore team uses AI tools to multiply output while the compute cost stays within tool subscriptions, not your infrastructure budget.

Think about it concretely. A three-person Philippine development team at $7,500/month per developer costs you $22,500/month. Even if you budget another $500/month per developer in AI tooling subscriptions on top of that, you’re at $24,000/month for a team producing output that would require five to six US-based developers before AI augmentation, roughly $75,000 to $105,000/month at the US rates above. When communication structures are set up correctly, that team ships production code at a pace that makes the $180K+ per-head US alternative look like a different era of doing business.
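The team-cost comparison reduces to a few lines of arithmetic. This sketch uses only the article’s own figures ($7,500 per Philippine developer per month, $500 in tooling, five to six US developers at $180,000 to $210,000 per year); the function names are illustrative, and the output multiple is only as good as those inputs.

```python
def ph_team_monthly(devs: int, cost_per_dev: float = 7_500, tooling_per_dev: float = 500) -> float:
    """Monthly cost of a Philippine team, using the per-developer figures above."""
    return devs * (cost_per_dev + tooling_per_dev)


def us_team_monthly(devs: int, annual_cost_per_dev: float) -> float:
    """Monthly cost of a US team given an annual per-developer cost."""
    return devs * annual_cost_per_dev / 12


if __name__ == "__main__":
    ph = ph_team_monthly(3)
    us_low = us_team_monthly(5, 180_000)   # five developers at the low end
    us_high = us_team_monthly(6, 210_000)  # six developers at the high end
    print(f"Philippine team: ${ph:,.0f}/month")
    print(f"US equivalent: ${us_low:,.0f} to ${us_high:,.0f}/month")
    print(f"US cost multiple: {us_low / ph:.1f}x to {us_high / ph:.1f}x")
```

At these figures the US team costs roughly 3.1 to 4.4 times as much per month, before counting any productivity gain from AI tooling on either side.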

The companies writing $175 billion checks on AI infrastructure are, in effect, subsidizing your outsourcing economics.

But this subsidy depends on the arms race continuing. If spending plateaus, or if the analysts warning about OpenAI and Anthropic dependency risks turn out to be right, the downstream effects could include API price increases, reduced free-tier access, or slower model improvement cycles. Your outsourcing budget needs a hedge against that scenario, and the hedge is people who understand the tools well enough to switch between them when pricing shifts.
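Switching when pricing shifts is easier when the comparison is mechanical rather than a judgment call made under deadline. The sketch below picks the cheapest option that clears a team-defined quality bar. The provider names, prices, and scores are hypothetical, and `quality_score` stands in for whatever internal evaluation a team runs on its own client tasks.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_million_tokens: float  # hypothetical blended price
    quality_score: float           # hypothetical internal eval score, 0 to 1


def cheapest_adequate(options: Iterable[ModelOption], min_quality: float) -> Optional[ModelOption]:
    """Return the lowest-priced option meeting the quality bar, or None if none does."""
    adequate = [o for o in options if o.quality_score >= min_quality]
    return min(adequate, key=lambda o: o.usd_per_million_tokens, default=None)


if __name__ == "__main__":
    catalog = [  # hypothetical catalog; refresh whenever a vendor changes its price list
        ModelOption("commercial-large", 10.0, 0.92),
        ModelOption("commercial-mini", 1.5, 0.84),
        ModelOption("open-weight-self-hosted", 0.8, 0.81),
    ]
    choice = cheapest_adequate(catalog, min_quality=0.80)
    print(choice.name if choice else "no option meets the bar")
```

Keeping the quality bar explicit matters: it is what stops a price-driven switch from quietly degrading client-facing work.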

The Spending Escalator and Your Ninety-Day Window

Google has said 2027 capex will significantly increase over 2026. OpenAI is targeting an IPO by late 2026 on the back of $25 billion in annualized revenue. The Pentagon signed AI deals with OpenAI, Google, Microsoft, and Nvidia this week, cutting Anthropic out entirely over disagreements about potential AI misuse. The spending escalator keeps climbing, and the competitive dynamics keep shifting underneath every tool in your stack.

Three things follow from this week’s news for outsourcing operators.

For Philippine teams, AI adoption is table stakes, not a differentiator. If your offshore developers aren’t using AI-assisted coding, AI-powered QA, and model-driven testing in their daily workflows, they’re losing ground to teams that are. The cost of non-adoption is measured in velocity, not dollars.

Your outsourcing partner’s relationship with AI tooling matters as much as their headcount. When you’re evaluating how an agency scales operations, ask what models their teams use, how they handle deprecation cycles, and whether they’ve built any workflows on open-weight alternatives. Those answers tell you whether they’ll absorb or pass through the next API pricing change.

And the window for building an outsourced link building operation, a content marketing pipeline, or a development team that’s AI-native is right now, while Big Tech’s spending war keeps the tools cheap. Every signal the companies have given suggests that war will continue through 2027. But current pricing reflects a land-grab mentality, with platforms pricing below sustainable levels to capture market share. Enterprises building outsourcing stacks on those prices should plan for a world where compute costs eventually stabilize at higher levels, and the competitive advantage shifts permanently to teams that learned the tools while they were affordable.

The $130 billion that Google, Amazon, Microsoft, and Meta spent this quarter bought a lot of infrastructure. Some of it will power the tools your Philippine team uses tomorrow morning. Whether that keeps working in your favor depends on how deliberately you’ve built your stack to survive the moment the subsidies end and real pricing begins.