Google began auto-migrating Dynamic Search Ads campaigns to AI Max in early 2026, giving advertisers a choice: move on your own timeline, or get moved. For one mid-market ecommerce account spending roughly $40,000 per month on Google Ads, that distinction turned out to be worth about six weeks of wrecked performance and $58,000 in wasted spend.

This is a walkthrough of how the recovery played out, and what the rebuild looked like once the team stopped trying to fix the new system and started treating it as a fresh account.

The DSA-to-AI-Max Deadline

Google announced the transition path from Dynamic Search Ads to AI Max with enough lead time for teams to review controls, adjust structure, and test performance on their own schedule. The migration documentation from ALM Corp was explicit: forced migrations rarely cause problems at the exact moment of switchover. The bigger risk shows up in the weeks afterward, when teams realize they didn’t fully understand what changed.

This particular account had been running DSAs alongside standard search campaigns for three years. The DSA campaigns caught long-tail queries the keyword campaigns missed, drove about 22% of total conversions, and ran on a Target CPA bid strategy that had been stable for over a year. The account manager, an in-house marketer at a 40-person company, decided to let the auto-migration happen rather than rebuild manually.

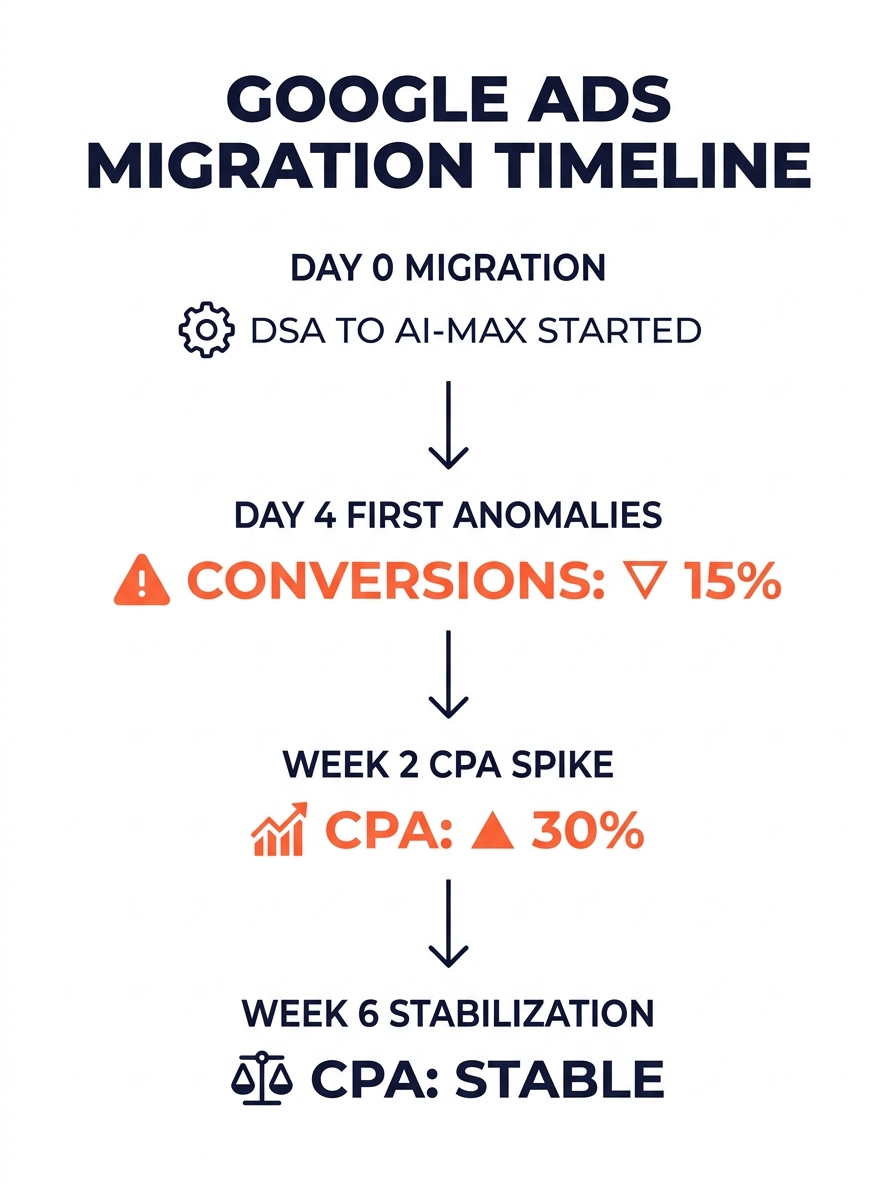

The migration itself went live without errors. Ads served. Impressions looked normal. The trouble started four days later.

Automated Bidding Fed on Contaminated Signals

AI Max uses existing keywords, ad copy, and landing page content as signals for ad targeting. That’s a fundamentally different model from the old DSA crawl-and-match approach. When the migration happened, the bidding algorithm inherited historical conversion data from the DSA campaigns, but the signals feeding it had changed. AI Max was now expanding final URLs to pages the DSA campaigns had never touched, and the conversion tracking was attributing actions across a wider set of landing pages.

The result: the Target CPA algorithm thought it was optimizing toward a stable goal, but the underlying data was noisy. Conversion counts looked healthy for the first week because AI Max was matching broader queries and claiming credit for conversions that standard search campaigns had historically driven. The CPA appeared to hold, then started climbing on day eight.

By week two, the blended CPA across the account had risen 34%. The AI Max campaigns were spending faster and converting at a worse rate, while the standard search campaigns, now competing with AI Max for the same queries, saw their impression share drop by 19%.

This failure pattern is well documented among PPC practitioners: automated bidding is dangerous in accounts with weak segmentation and dirty conversion data. The usual failure is boring. An algorithm optimizes on mixed-intent campaigns, and the numbers erode gradually enough that nobody sounds the alarm for weeks.

Campaigns don’t usually collapse overnight. They underperform just enough to go unnoticed, while continuously eating into your spend.
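One way to catch this kind of slow erosion is a weekly check of CPA against a fixed baseline. The sketch below is illustrative only: the `WeekStats` structure, the 15% threshold, and the sample figures are assumptions, not data from this account.

```python
from dataclasses import dataclass


@dataclass
class WeekStats:
    label: str
    cost: float         # total ad spend for the week
    conversions: float  # conversions reported for the week


def cpa(cost, conversions):
    """Cost per acquisition; infinite when there are no conversions."""
    return cost / conversions if conversions > 0 else float("inf")


def flag_cpa_drift(weeks, baseline_cpa, threshold=0.15):
    """Return (label, percent over baseline) for weeks whose CPA
    exceeds the baseline by more than `threshold`."""
    flagged = []
    for week in weeks:
        drift = cpa(week.cost, week.conversions) / baseline_cpa - 1.0
        if drift > threshold:
            flagged.append((week.label, round(drift * 100, 1)))
    return flagged


# Hypothetical figures for illustration.
weeks = [
    WeekStats("week 1", 10_000, 100),  # CPA 100, on baseline
    WeekStats("week 2", 10_000, 80),   # CPA 125
    WeekStats("week 3", 12_000, 75),   # CPA 160
]
alerts = flag_cpa_drift(weeks, baseline_cpa=100.0)
print(alerts)
```

The baseline is deliberately fixed rather than a rolling average. A rolling average drifts along with the erosion and can hide exactly the gradual decline described above.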

The $14,000 Week That Forced a Full Pause

Week three was when the numbers became impossible to ignore. The account spent $14,200 in a single week with a CPA 67% above the historical average. The conversion volume looked acceptable in the dashboard, but when the team reviewed actual downstream revenue in their CRM, they found that lead quality had deteriorated sharply. AI Max was driving form fills from queries that had nothing to do with the product’s core use case.
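The dashboard-versus-CRM comparison can be approximated with a per-query qualified rate. This is a minimal sketch, assuming each CRM lead record carries the search query that produced it and a qualified flag; the field names, thresholds, and sample queries are hypothetical.

```python
from collections import defaultdict


def qualified_rate_by_query(leads):
    """leads: iterable of dicts with 'query' and 'qualified' (bool) keys.
    Returns {query: (form_fills, qualified_rate)}."""
    counts = defaultdict(lambda: [0, 0])  # query -> [form fills, qualified leads]
    for lead in leads:
        counts[lead["query"]][0] += 1
        if lead["qualified"]:
            counts[lead["query"]][1] += 1
    return {q: (fills, good / fills) for q, (fills, good) in counts.items()}


def junk_queries(leads, min_fills=3, max_rate=0.2):
    """Queries with at least `min_fills` form fills whose qualified
    rate is at or below `max_rate`, sorted alphabetically."""
    rates = qualified_rate_by_query(leads)
    return sorted(
        q for q, (fills, rate) in rates.items()
        if fills >= min_fills and rate <= max_rate
    )


# Hypothetical CRM export for illustration.
leads = (
    [{"query": "widget pro pricing", "qualified": True}] * 3
    + [{"query": "widget pro pricing", "qualified": False}]
    + [{"query": "free widget template", "qualified": False}] * 5
    + [{"query": "widget jobs", "qualified": False}] * 2
)
flagged = junk_queries(leads)
print(flagged)
```

Queries flagged this way are candidates for review, not automatic negatives. A low qualified rate on a handful of form fills may just be noise.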

The account manager’s first instinct was to adjust the Target CPA down by 15% and add negative keywords. That’s the standard PPC troubleshooting playbook, and it’s the wrong move during a migration disruption. Tightening a bid strategy while the algorithm is still learning from bad data just restricts volume without fixing the signal quality problem. The campaign entered a “learning limited” state within 48 hours, which further degraded performance.

At this point, the company brought in an outsourced Google Ads migration specialist, a PPC analyst based in the Philippines who’d handled similar transitions for eight other accounts that quarter. If you’ve read our breakdown of how Philippine PPC specialists often outperform in-house teams on ROI, the mechanism is the same here: dedicated focus on one discipline, across enough accounts to pattern-match problems quickly.

The specialist’s first recommendation: pause everything in AI Max, pause automated bidding on standard search, and rebuild.

Rebuilding on Phrase Match and Manual CPC

The PPC campaign rebuild started from a position the account manager found uncomfortable: manual CPC bidding on a stripped-down set of phrase match and exact match keywords. No automated bidding. No broad match. No AI Max.

Here’s why that works after a migration disruption. Google’s automated bidding systems need clean, stable conversion data to function. Establishing baseline analytics before and after any migration is standard practice in SEO, and the same principle applies to paid search. If your conversion data is contaminated by a structural change, whether that’s a domain migration, a platform switch, or a campaign type migration, you need to re-establish the baseline before you can trust automation again.

The rebuild followed this sequence:

- Audit all conversion actions. The specialist found three redundant conversion events firing on the same thank-you page, inflating reported conversions by roughly 40%. This had been true before the migration too, but automated bidding had compensated for it over time. The migration reset that compensation.

- Rebuild campaigns around 120 high-intent keywords. The previous account had over 800 keywords across DSA and standard campaigns. The new structure started with the 120 keywords that had driven 80% of actual closed revenue over the prior six months.

- Set manual CPC bids at 70% of the historical average CPC. Conservative bidding let the team collect clean performance data without overspending during the learning phase.

- Run for 14 days without changes. This is the hardest part. The instinct to adjust bids daily is strong, but frequent changes during a migration period disrupt learning and prevent you from getting reliable data. The specialist set a rule: no bid adjustments for two full weeks.
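The first three steps of that sequence can be sketched in a few lines. This is a rough illustration under stated assumptions, not the specialist's actual tooling: the event fields (`session_id`, `page`, `name`), the keyword names, and the revenue figures are all hypothetical.

```python
def dedupe_conversions(events):
    """Keep one conversion per (session_id, page) pair; later duplicates are dropped."""
    seen = set()
    unique = []
    for event in events:
        key = (event["session_id"], event["page"])
        if key not in seen:
            seen.add(key)
            unique.append(event)
    return unique


def select_core_keywords(revenue_by_keyword, share=0.80):
    """Smallest set of top-revenue keywords whose combined closed
    revenue reaches `share` of the total."""
    total = sum(revenue_by_keyword.values())
    picked, running = [], 0.0
    ranked = sorted(revenue_by_keyword.items(), key=lambda kv: kv[1], reverse=True)
    for keyword, revenue in ranked:
        if running >= share * total:
            break
        picked.append(keyword)
        running += revenue
    return picked


def starting_bid(historical_avg_cpc, factor=0.70):
    """Conservative manual CPC: a fraction of the historical average, rounded to cents."""
    return round(historical_avg_cpc * factor, 2)


# Hypothetical data for illustration.
events = [
    {"session_id": "s1", "page": "/thank-you", "name": "form_submit"},
    {"session_id": "s1", "page": "/thank-you", "name": "lead"},
    {"session_id": "s1", "page": "/thank-you", "name": "signup"},
    {"session_id": "s2", "page": "/thank-you", "name": "form_submit"},
]
unique_events = dedupe_conversions(events)

revenue = {
    "widget pro pricing": 500,
    "buy widget pro": 350,
    "widget reviews": 80,
    "widget alternatives": 40,
    "what is a widget": 30,
}
core = select_core_keywords(revenue)
bid = starting_bid(2.00)
print(len(unique_events), core, bid)
```

In practice the deduplication belongs in the tag setup itself, so that only one conversion action fires per form submission. The function above only shows how the inflation could be measured from an event export.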

The team also used this window to fix landing page alignment. Several of the top-spending keywords were sending traffic to category pages instead of product-specific pages, which inflated bounce rates and depressed conversion rates. A Philippine virtual assistant handled the QA process, checking every destination URL against the keyword intent and flagging mismatches, which freed the PPC specialist to focus on the account structure.
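A destination URL check like this can be partly scripted before a person reviews the edge cases. The sketch below assumes a site convention where the first path segment is `category` or `product`, and assumes each keyword has been hand-labeled with its intended page type; both are hypothetical, as are the example URLs.

```python
from urllib.parse import urlparse


def page_type(url):
    """Classify a URL by its first path segment (assumed site convention)."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    if not segments:
        return "home"
    return {"category": "category", "product": "product"}.get(segments[0], "other")


def flag_mismatches(rows):
    """rows: dicts with 'keyword', 'intent' ('product' or 'category'),
    and 'final_url'. Returns the keywords whose landing page type
    differs from the intended type."""
    return [r["keyword"] for r in rows if page_type(r["final_url"]) != r["intent"]]


# Hypothetical keyword-to-URL export for illustration.
rows = [
    {"keyword": "widget pro 3000", "intent": "product",
     "final_url": "https://example.com/category/widgets"},
    {"keyword": "widget pro 3000 price", "intent": "product",
     "final_url": "https://example.com/product/widget-pro-3000"},
    {"keyword": "industrial widgets", "intent": "category",
     "final_url": "https://example.com/category/widgets"},
]
mismatches = flag_mismatches(rows)
print(mismatches)
```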

Warning: If you’re troubleshooting a failed PPC migration, resist the urge to make daily bid adjustments. Every change restarts the learning window. Fourteen days of zero changes sounds like an eternity when your CPA is elevated, but it’s the fastest path to data you can actually trust.

Ninety Days After the Reset: CPA Below Where It Started

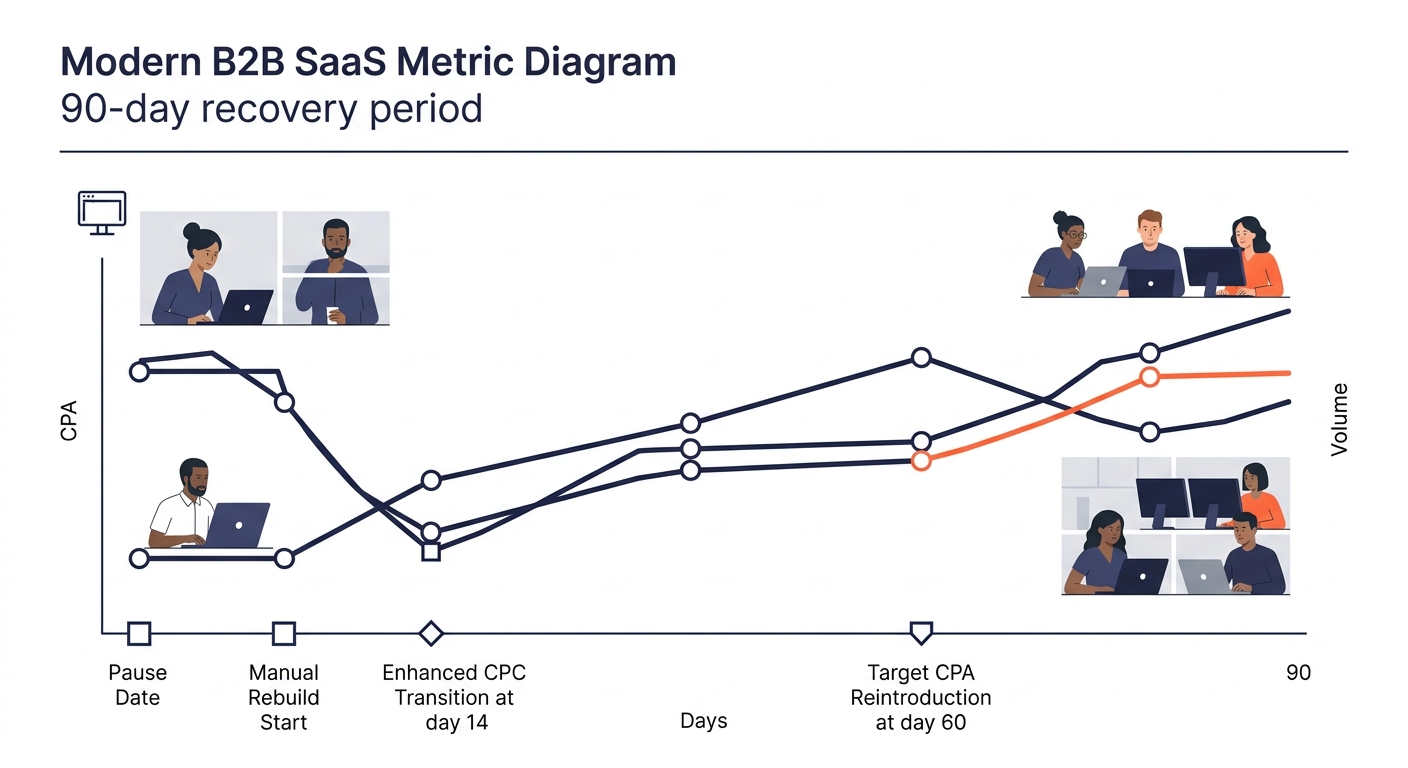

By day 14 of the manual rebuild, the account had collected enough clean conversion data to calculate a reliable CPA for each keyword cluster. The specialist transitioned the top 40 keywords to Enhanced CPC, keeping the remaining 80 on manual bids.
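The source doesn't say how the top 40 were chosen. One plausible rule, shown here purely as an assumption, is to rank keywords by CPA and promote only those with enough conversions for the CPA to be meaningful. The minimum conversion count and the sample data are hypothetical.

```python
def split_for_automation(stats, top_n, min_conversions=5):
    """stats: {keyword: (cost, conversions)}.
    Returns (promote, keep_manual): the `top_n` lowest-CPA keywords that
    meet the minimum conversion count, and every other keyword."""
    floor = max(min_conversions, 1)  # also guards against division by zero
    eligible = sorted(
        (cost / conversions, keyword)
        for keyword, (cost, conversions) in stats.items()
        if conversions >= floor
    )
    promote = [keyword for _, keyword in eligible[:top_n]]
    keep_manual = [keyword for keyword in stats if keyword not in promote]
    return promote, keep_manual


# Hypothetical 14-day keyword stats: (cost, conversions).
stats = {
    "kw a": (200.0, 10),  # CPA 20
    "kw b": (300.0, 10),  # CPA 30
    "kw c": (90.0, 2),    # too few conversions to trust
    "kw d": (500.0, 20),  # CPA 25
}
promote, keep_manual = split_for_automation(stats, top_n=2)
print(promote, keep_manual)
```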

By day 30, conversions were back to 85% of pre-migration volume. CPA was 12% above the old baseline, but lead quality had improved measurably. Downstream close rates were actually higher than in the pre-migration period because the junk queries were gone.

By day 60, the specialist introduced Target CPA bidding on the top-performing campaigns, using the most recent 30 days of clean conversion history as the foundation. This time, the algorithm had real data to work with. CPA dropped below the pre-migration average within two weeks of the switch.

At the 90-day mark, the account was spending $38,000 per month (slightly below the original $40,000), generating 18% more qualified leads, and running at a CPA 9% lower than the pre-migration baseline. The return on the rebuild was clear in the numbers: the $58,000 in wasted spend during weeks one through six was painful, but the rebuilt account was structurally healthier than what it replaced.

The account has not re-enabled AI Max. The specialist’s position is to wait until Google provides better controls for final URL expansion and query-level reporting before testing it again. That’s a defensible call. The same logic applies to any Google Ads migration, outsourced or in-house: you don’t adopt a new campaign type until you can verify what it’s doing with your budget. Treating adoption as a calculated ROI decision rather than a checkbox exercise is what separates accounts that recover quickly from ones that bleed spend for months.

The broader pattern from this migration isn’t about AI Max specifically. Any structural change to a paid search account can poison the data that automated systems depend on. Domain migrations, platform switches, campaign type changes, even major landing page redesigns all carry the same risk. When that happens, the fastest path back to stable performance runs through a deliberate downshift: manual bids, tight keyword sets, clean conversion tracking, and two weeks of patience before anyone touches anything. In this account, recovery took about 90 days. Trying to fix a broken automation layer by tweaking settings from within the broken system can stretch that timeline to six months, and the common operational mistakes that erode outsourcing ROI apply here too: rushing the process, skipping the data audit, and changing too many variables at once will cost you more than the original disruption did.