Marpipe’s internal benchmarks show that multivariate ad testing can produce “clear, clean learnings that impact strategy and revenue” with budgets as low as $1,000. A thousand dollars and a week of focused work. The barrier to running more tests has never been budget. It’s been staffing bandwidth. And that gap between what you could test and what you actually test is where most digital marketing strategies quietly stall.

According to a Remote Growth Partners survey of more than 200 U.S. teams, the highest ROI from global hiring shows up when companies reinvest payroll savings into more ad testing, more landing page variants, and faster iteration cycles. The pattern is consistent: teams that test more, win more. The constraint isn’t strategic vision. The constraint is how many hands you have building and measuring experiments.

This article breaks down the numbers behind that constraint and explains why outsourcing rapid campaign testing has become the operational advantage separating fast-moving agencies from everyone else.

Why the Testing Gap Keeps Growing

A senior paid media specialist in the U.S. costs $75,000 to $95,000 per year, fully loaded. That person handles strategy, client communication, reporting, and (when there’s time left over) actual campaign experimentation. On a good week, they might launch two or three ad variants for a single account. On a busy week, they launch zero.

Meanwhile, each ad platform’s algorithm rewards volume. Meta, Google, and TikTok all perform better when you feed them more creative variants, more audience segments, more landing page combinations. The platforms are designed for rapid marketing iteration, but most in-house teams are staffed for maintenance.

The math is straightforward. If your team runs five tests per month and a competitor runs twenty, the competitor accumulates winning data four times faster. Over a quarter, they’ve identified top-performing creative, dialed in their audiences, and trimmed waste from their spend. Your team is still working through round one.

This is the core problem with a fast-moving market strategy built on a slow-moving team. The strategy document might be brilliant. The execution cadence makes it irrelevant.

Agile Sprints With an Offshore Marketing Team

Google’s marketing team has publicly advocated for agile approaches to marketing, emphasizing “smaller, frequent outputs that can be tested and optimized continuously” over big-bang campaigns. Adobe’s breakdown of agile marketing frameworks echoes the same point: agile thrives on flexibility and rapid iteration, unlike waterfall structures that rely on rigid, top-down planning.

The problem with applying agile to a three-person marketing department is arithmetic. Sprints require someone to plan the test, someone to build the assets, someone to QA and launch, and someone to pull data and analyze results. In a small team, that “someone” is the same person four times over. Sprint velocity collapses before it starts.

Offshore teams change the ratio. When you add two or three execution specialists at $10 to $15 per hour, your U.S.-based strategist can design ten experiments per sprint instead of three. The offshore team builds the email sequences, sets up the A/B tests, creates the ad variants, configures the tracking, and compiles the performance reports. Your stateside lead reads the results and decides what to scale.

This is how outsourced PPC management works in practice for growing agencies. The strategic layer stays local. The build-and-measure layer scales offshore. And the sprint cadence accelerates from monthly to weekly.

What a Two-Week Sprint Looks Like

Here’s a realistic sprint for a mid-market e-commerce brand working with a four-person offshore marketing pod:

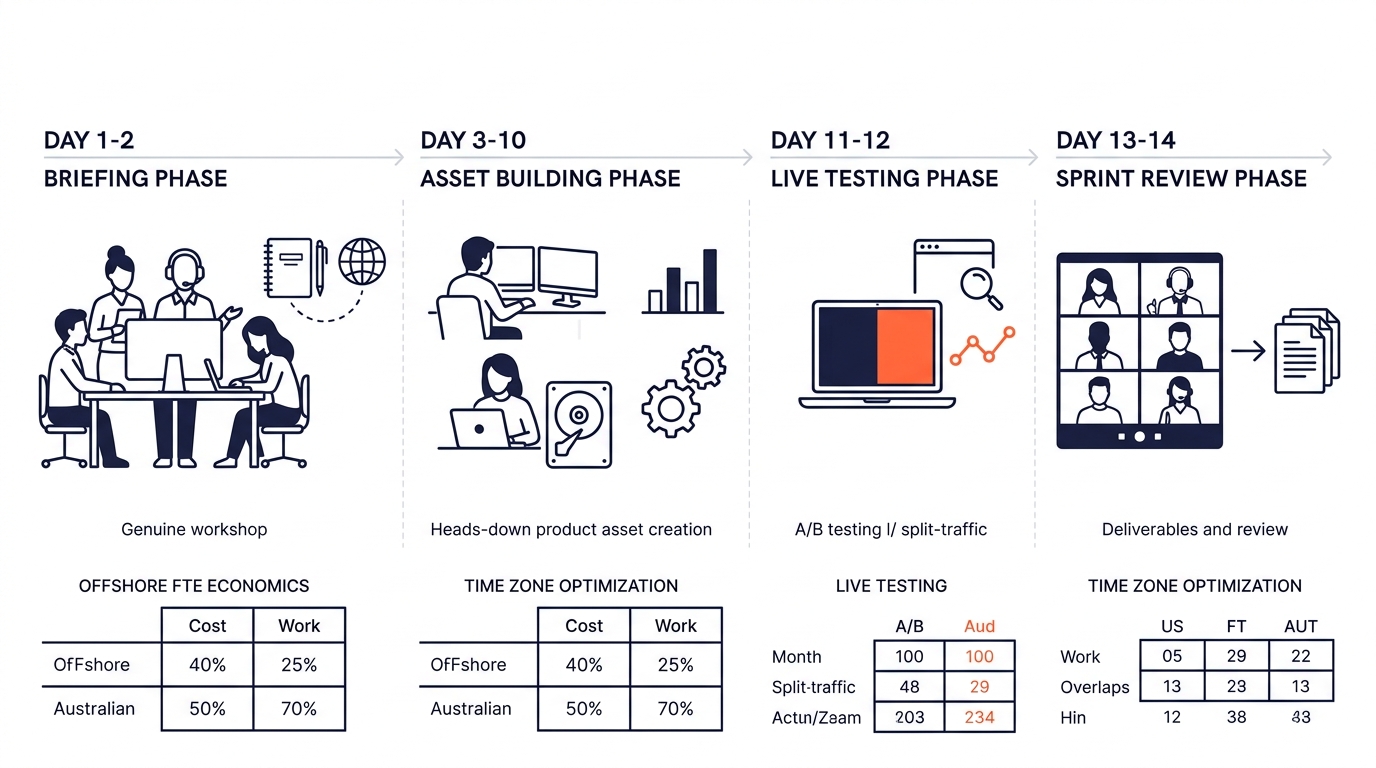

- Days 1–2: Strategist briefs the team on three test hypotheses (new headline angles, audience segments, landing page layouts)

- Days 3–7: Offshore team builds all variants, sets up tracking pixels, creates UTM structures, and stages campaigns in the ad platform

- Days 8–12: Campaigns run live; offshore analysts pull daily performance snapshots

- Days 13–14: Sprint review. The team kills underperformers, doubles budget on winners, and documents learnings for the next sprint

Run back to back, that’s two complete sprints per month, each covering three hypotheses. Most in-house teams struggle to complete one.
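The “creates UTM structures” step in days 3–7 can be sketched in a few lines. This is a minimal illustration, not a prescribed workflow: the function name `build_utm_url`, the example domain, and the campaign and variant labels are all hypothetical. The four `utm_*` keys are the standard campaign parameters most analytics tools read.

```python
from itertools import product
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def build_utm_url(base_url, source, medium, campaign, content):
    """Return base_url with UTM parameters appended (hypothetical helper)."""
    parts = urlsplit(base_url)
    # Keep any query parameters already on the landing page URL.
    query = dict(parse_qsl(parts.query))
    query.update(
        {
            "utm_source": source,
            "utm_medium": medium,
            "utm_campaign": campaign,
            "utm_content": content,
        }
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


# Assumed test matrix: two headline angles crossed with two audience segments.
headlines = ["headline-a", "headline-b"]
audiences = ["lookalike", "retargeting"]

urls = [
    build_utm_url(
        "https://example.com/landing",
        source="meta",
        medium="paid_social",
        campaign="sprint-01",
        content=f"{headline}_{audience}",
    )
    for headline, audience in product(headlines, audiences)
]

for url in urls:
    print(url)
```

Generating the links from the test matrix, rather than typing them by hand, keeps the naming consistent, so the analysts pulling daily snapshots can group results by variant without cleanup.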

The Economics of Rapid Iteration

Philippine marketing specialists with hands-on experience in platforms like HubSpot, Mailchimp, Klaviyo, Google Ads, and Meta Business Suite typically cost between $8 and $18 per hour depending on seniority. Philippine agencies ranked on Clutch.co’s April 2026 digital marketing listings include firms like Pronto Marketing, where 100% of reviewed clients specifically commend flexibility, responsiveness, and on-time delivery.

Let’s put real numbers on the testing advantage:

U.S.-only team (3 FTEs):

- Annual payroll: ~$270,000

- Monthly test output: 5–8 experiments

- Cost per experiment: ~$2,800–$4,500

Hybrid team (1 U.S. strategist + 3 Philippine specialists):

- Annual payroll: ~$155,000

- Monthly test output: 16–24 experiments

- Cost per experiment: ~$540–$810

That’s roughly a 5x reduction in cost per experiment. But the real payoff isn’t the savings. It’s the compound effect of learning faster. After six months, the hybrid team has run roughly 120 experiments compared to the U.S.-only team’s 36. The hybrid team knows which subject lines convert, which ad hooks drive clicks, which landing page layouts reduce bounce rates, and which audience segments are worth scaling.
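The arithmetic behind those figures is easy to reproduce. The sketch below uses only the article’s round numbers (annual payroll and monthly test output). The function name `cost_per_experiment` is hypothetical, and it assumes payroll is the only cost attributed to testing.

```python
def cost_per_experiment(annual_payroll: float, tests_per_month: int) -> float:
    """Monthly payroll divided by the experiments run in that month."""
    return annual_payroll / 12 / tests_per_month


# Inputs taken from the two team profiles above.
teams = {
    "us_only": {"payroll": 270_000, "tests": (5, 8)},
    "hybrid": {"payroll": 155_000, "tests": (16, 24)},
}

results = {}
for name, team in teams.items():
    low_volume, high_volume = team["tests"]
    # More tests in a month means a lower cost per test, so the
    # high-volume month gives the low end of the cost range.
    results[name] = (
        cost_per_experiment(team["payroll"], high_volume),
        cost_per_experiment(team["payroll"], low_volume),
    )
    low_cost, high_cost = results[name]
    print(f"{name}: ${low_cost:,.0f} to ${high_cost:,.0f} per experiment")

# Compare matching ends of each range: best month vs. best month,
# slowest month vs. slowest month.
ratio_best = results["us_only"][0] / results["hybrid"][0]
ratio_worst = results["us_only"][1] / results["hybrid"][1]
print(f"reduction: {ratio_best:.1f}x to {ratio_worst:.1f}x")
```

Compared at matching ends of the two ranges, the reduction comes out slightly above 5x. Swapping in your own payroll and output numbers is the quickest way to see whether the same ratio holds for your team.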


If you’re already exploring how to outsource digital marketing to the Philippines, the speed testing angle gives you a concrete framework for measuring whether the arrangement is working. Don’t track hours logged. Track experiments completed per sprint.

Where Philippine Teams Fit the Workflow

Offshore digital marketing agility depends on three operational factors: platform literacy, time-zone overlap, and communication rhythm.

Platform literacy is the easiest box to check. The Philippine BPO sector has produced tens of thousands of specialists trained on the same martech stack U.S. agencies use daily. Offshore email marketing teams build campaigns, segment lists, write copy, and analyze performance across HubSpot, Mailchimp, and Klaviyo. CRO specialists run A/B tests, analyze user behavior, and recommend site improvements. These aren’t junior associates learning on the job. They’re execution professionals who’ve been doing this work for years.

Time-zone overlap is where the Philippines has a structural advantage over other offshore destinations for U.S. and Australian clients. Manila is UTC+8 year-round, which means a Philippine team’s morning (8 AM local) lands at 8 PM Eastern the previous evening during U.S. daylight saving time, and at 7 PM during standard time. A strategist in New York can brief the team at end of day, and the Philippine team builds overnight. By morning Eastern time, the work is staged for review. This creates a near-continuous production cycle without anyone working overtime.
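To check the overlap window for any date, the standard-library `zoneinfo` module (Python 3.9+) handles the conversion, including the U.S. daylight saving shift. This is a small sketch: the helper name `manila_to_new_york` and the two sample dates are illustrative choices, not part of any scheduling tool.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # may need the tzdata package on Windows

MANILA = ZoneInfo("Asia/Manila")
NEW_YORK = ZoneInfo("America/New_York")


def manila_to_new_york(year: int, month: int, day: int, hour: int) -> datetime:
    """Convert a Manila wall-clock hour to New York time (hypothetical helper)."""
    local = datetime(year, month, day, hour, tzinfo=MANILA)
    return local.astimezone(NEW_YORK)


# 8 AM Manila on one summer date and one winter date.
summer = manila_to_new_york(2025, 7, 15, 8)
winter = manila_to_new_york(2025, 1, 15, 8)

print("Summer:", summer.strftime("%Y-%m-%d %H:%M %Z"))
print("Winter:", winter.strftime("%Y-%m-%d %H:%M %Z"))
```

The Philippines does not observe daylight saving time, so the overlap window moves by an hour on the U.S. side twice a year. Recurring standups are worth re-checking around the March and November clock changes.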

Communication rhythm matters more than people assume. Teams that understand why cross-cultural training reduces conflict tend to set up structured async check-ins rather than defaulting to endless Slack pings. A 30-minute daily standup at the overlap window (usually 8–9 PM Eastern / 8–9 AM Manila) keeps everyone aligned without draining anyone’s schedule.

And when the testing cadence involves content assets, having a dedicated content team producing blog posts, ad copy, email sequences, and social captions means your strategist never waits on creative. The bottleneck shifts from “who’s going to build this?” to “which test should we prioritize?”

For agencies scaling multiple client accounts simultaneously, the approach mirrors what growing advertising agencies use when building offshore teams: start with a small pod, prove the sprint cadence, then replicate the model across accounts.

What the Numbers Still Can’t Answer

The economics are clear. Offshore teams enable more testing at lower cost. The agile framework is proven. The Philippine talent pool is deep and platform-literate. But there are real questions the data doesn’t resolve yet.

How long does the ramp-up take? Filipino agencies like those on Clutch.co report fast onboarding, but every team, product, and brand voice has its own learning curve. The first sprint is almost always slower than projected. Two to three sprints in, you get a realistic baseline for velocity.

Does more testing always produce better outcomes? There’s a point of diminishing returns where you’re testing marginal differences (button color, comma placement) instead of meaningful hypotheses. Speed without strategic direction produces noise, not signal. The U.S.-side strategist’s judgment about what to test matters as much as the offshore team’s ability to run the test.

What happens when you scale past one pod? Managing one offshore team of three people is relatively simple. Managing four pods across six client accounts introduces coordination overhead that can eat into the speed advantage. The role of account managers in customizing outsourcing arrangements becomes critical at that stage.

These are the questions worth tracking as you build your own data. The teams that document their sprint velocity, cost per experiment, and win rate over time will be the ones who can answer them with precision instead of anecdote. And those numbers, accumulated over dozens of sprints, become a competitive asset that’s genuinely hard for slower-moving competitors to replicate.
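One lightweight way to start that documentation is a per-sprint record holding the three metrics named above. The sketch below is an assumption about how such a log might look. The `SprintRecord` class, its field names, and the sample values are placeholders for illustration, not real results.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SprintRecord:
    label: str
    experiments_completed: int
    winners: int        # experiments that beat their control
    team_cost: float    # payroll attributed to the sprint, in dollars

    def cost_per_experiment(self) -> Optional[float]:
        # Undefined for a sprint that completed no experiments.
        if self.experiments_completed == 0:
            return None
        return self.team_cost / self.experiments_completed

    def win_rate(self) -> Optional[float]:
        if self.experiments_completed == 0:
            return None
        return self.winners / self.experiments_completed


def average_velocity(sprints: List[SprintRecord]) -> float:
    """Mean number of experiments completed per sprint."""
    return sum(s.experiments_completed for s in sprints) / len(sprints)


# Placeholder values for illustration only.
log = [
    SprintRecord("sprint-01", experiments_completed=4, winners=1, team_cost=6000.0),
    SprintRecord("sprint-02", experiments_completed=6, winners=2, team_cost=6000.0),
]

for sprint in log:
    print(sprint.label, sprint.cost_per_experiment(), sprint.win_rate())
print("average velocity:", average_velocity(log))
```

A spreadsheet does the same job. The point is to record the same three fields every sprint so the trend is comparable over time.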