Clutch.co’s April 2026 rankings list over 60 AI-focused development firms in the Philippines, including companies like BlastAsia, EACOMM, and TechnoYuga that build custom machine learning pipelines and AI-driven product architectures. That concentration of AI-capable shops in a single outsourcing market tells you something the cheap-labor narrative completely misses. Philippine development teams have built genuine web development specialization around AI-assisted architecture, and US agencies trying to replicate that capability internally keep running into walls they didn’t anticipate: hiring timelines that stretch six months, senior engineers who cost $15,000+ per month fully loaded, and a tooling landscape that changes faster than any single in-house team can track.

These six rules cover how to actually work with Philippine teams on AI-powered web development outsourcing, drawn from the operational patterns that separate productive engagements from expensive disappointments.

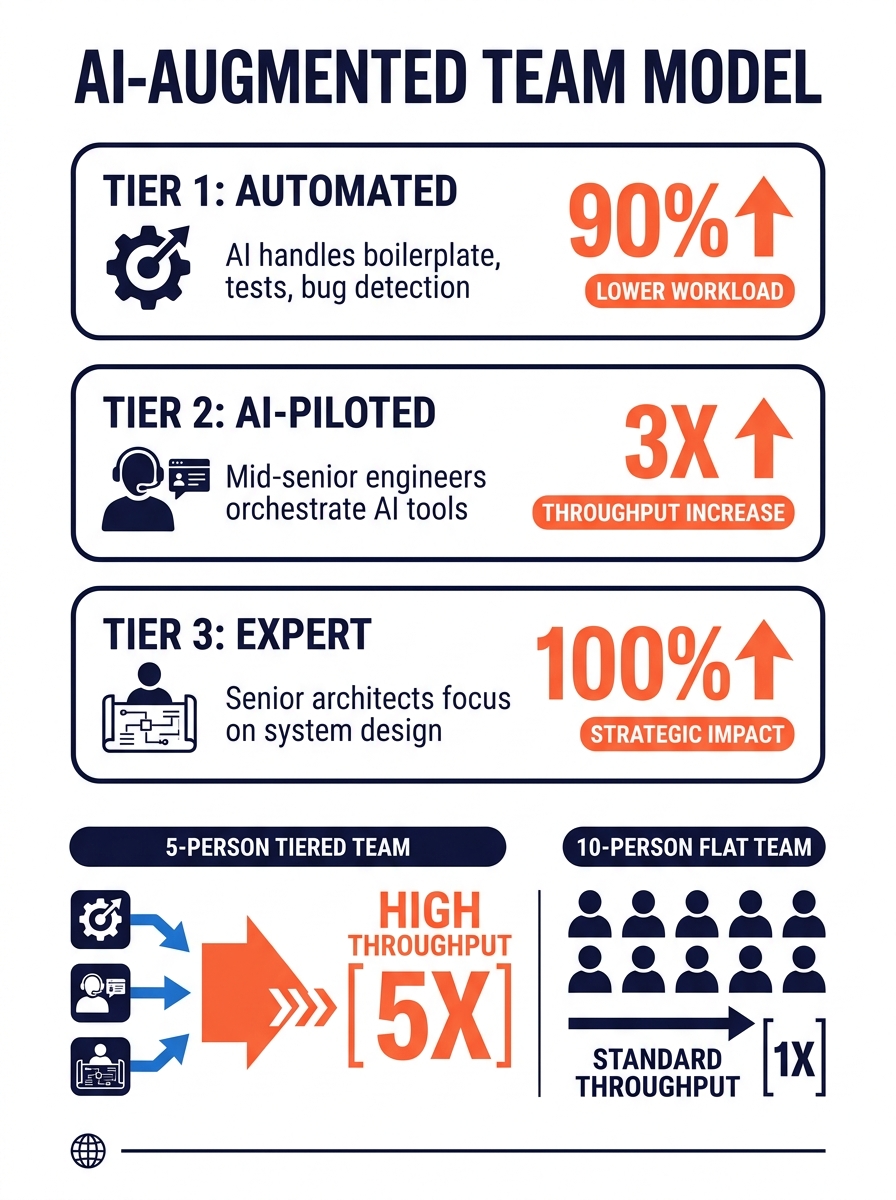

Structure your offshore team in tiers, not flat headcount

The most effective Philippine AI teams now operate on a three-tier model that redefines what “team size” means. At the first tier, AI handles boilerplate code generation, unit tests, and basic bug detection. Human review at this level is limited to spot-checking roughly 5% of output, which eliminates a huge chunk of traditional junior developer work. At the second tier, mid-to-senior engineers act as AI pilots, orchestrating tools for integration, debugging, and architectural decisions. They validate AI-generated code and escalate the genuinely hard problems. At the third tier, senior architects focus exclusively on system design, API strategy, and domain-specific logic.
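One way to make the tier-one spot-check concrete is to pick which AI-generated changes go to a human reviewer. The sketch below is illustrative only: the function name, the `change-N` identifiers, and the hash-based selection are assumptions, not a description of any particular team's tooling. It uses a hash so the same change is always either in or out of the sample, which keeps the check reproducible.

```python
import hashlib

SPOT_CHECK_RATE = 0.05  # the roughly 5% review rate quoted in the text


def needs_human_review(change_id: str, rate: float = SPOT_CHECK_RATE) -> bool:
    """Deterministically flag about `rate` of tier-one changes for review.

    `change_id` is a hypothetical identifier (a commit hash or PR number).
    """
    digest = hashlib.sha256(change_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto a float in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


# Hypothetical batch of AI-generated changes.
changes = [f"change-{i}" for i in range(10_000)]
selected = [c for c in changes if needs_human_review(c)]
print(f"{len(selected)} of {len(changes)} changes flagged for human review")
```

Random sampling would also work; hashing simply means nobody can argue later about whether a given change should have been reviewed.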

The practical result: a five-person Philippine team structured this way produces the throughput of an eight-to-ten-person team operating under a traditional flat model. Release velocity doubles or triples, and production incidents drop by 40–60%.

If you’re used to thinking about outsourced web development in terms of headcount, this model forces a useful reframing. You’re buying throughput capacity, not seats. When you hire six developers and two of them spend 70% of their time on work that AI now handles at tier one, you’re paying for labor that’s already been automated away.

This rule applies when you’re building anything that requires a scalable architecture that Philippine teams can iterate on over months: SaaS platforms, internal tools, client-facing web apps with real complexity. It breaks down for short, well-scoped projects where a single senior developer and an AI coding assistant can handle everything without the overhead of tier coordination.

Budget for AI tooling as a line item, not an afterthought

AI tools add $200–400 per developer per month to your total cost of ownership. That covers GitHub Copilot licenses, GPT or Claude API usage, CI/CD integrations, and observability platforms. It sounds small until you multiply it across a team of eight for twelve months, at which point you’re looking at $19,200–38,400 annually in tooling alone.
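The multiplication behind that annual figure is easy to rerun for your own team size. This is a minimal sketch; the function name and parameters are made up for illustration, and the only inputs are the per-developer range and team size quoted above.

```python
def annual_tooling_cost(per_dev_monthly: float, team_size: int, months: int = 12) -> float:
    """Total tooling spend for a team over the given number of months."""
    return per_dev_monthly * team_size * months


# Figures from the text: $200-400 per developer per month, team of eight.
low = annual_tooling_cost(200, team_size=8)
high = annual_tooling_cost(400, team_size=8)
print(f"${low:,.0f} to ${high:,.0f} per year")  # $19,200 to $38,400 per year
```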

Philippine teams still maintain a significant cost advantage even with these line items included. A senior developer in the Philippines runs $6,400–8,900 per month fully loaded (base salary, statutory benefits at 22–23%, AI tooling, management overhead). The US equivalent for comparable output sits at $15,600–19,500. That’s a gap of roughly 40–60%. But agencies that forget to budget for the tooling layer end up surprised by invoices or, worse, end up with teams that skip the AI-assisted workflow entirely because nobody is paying for the licenses.
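To see where the gap comes from, the ranges above can be compared directly. The sketch below uses only the monthly figures quoted in the text; the variable and function names are illustrative, and the choice of which ends of the ranges to pair is an assumption about how you want to frame the comparison.

```python
# Fully loaded monthly cost ranges quoted in the text (USD): (low, high).
PH_MONTHLY = (6_400, 8_900)
US_MONTHLY = (15_600, 19_500)


def savings_pct(offshore: float, onshore: float) -> float:
    """Percentage saved by paying `offshore` instead of `onshore`."""
    return (1 - offshore / onshore) * 100


like_for_like_low = savings_pct(PH_MONTHLY[0], US_MONTHLY[0])   # low vs low
like_for_like_high = savings_pct(PH_MONTHLY[1], US_MONTHLY[1])  # high vs high
conservative = savings_pct(PH_MONTHLY[1], US_MONTHLY[0])        # priciest PH vs cheapest US

print(f"low vs low:   {like_for_like_low:.0f}%")   # 59%
print(f"high vs high: {like_for_like_high:.0f}%")  # 54%
print(f"conservative: {conservative:.0f}%")        # 43%
```

Even the least favorable pairing, the most expensive Philippine developer against the cheapest US equivalent, stays above 40%.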

As a 2026 pricing guide from Appinventiv points out, the decision about location and team structure often impacts total AI development cost more than the technical architecture itself. Where you build matters as much as what you build.

This rule applies universally. Even if your project doesn’t involve training custom models, the productivity gains from AI-assisted coding, testing, and documentation make tooling investment pay for itself within the first sprint cycle. There’s no scenario where going without it makes financial sense.

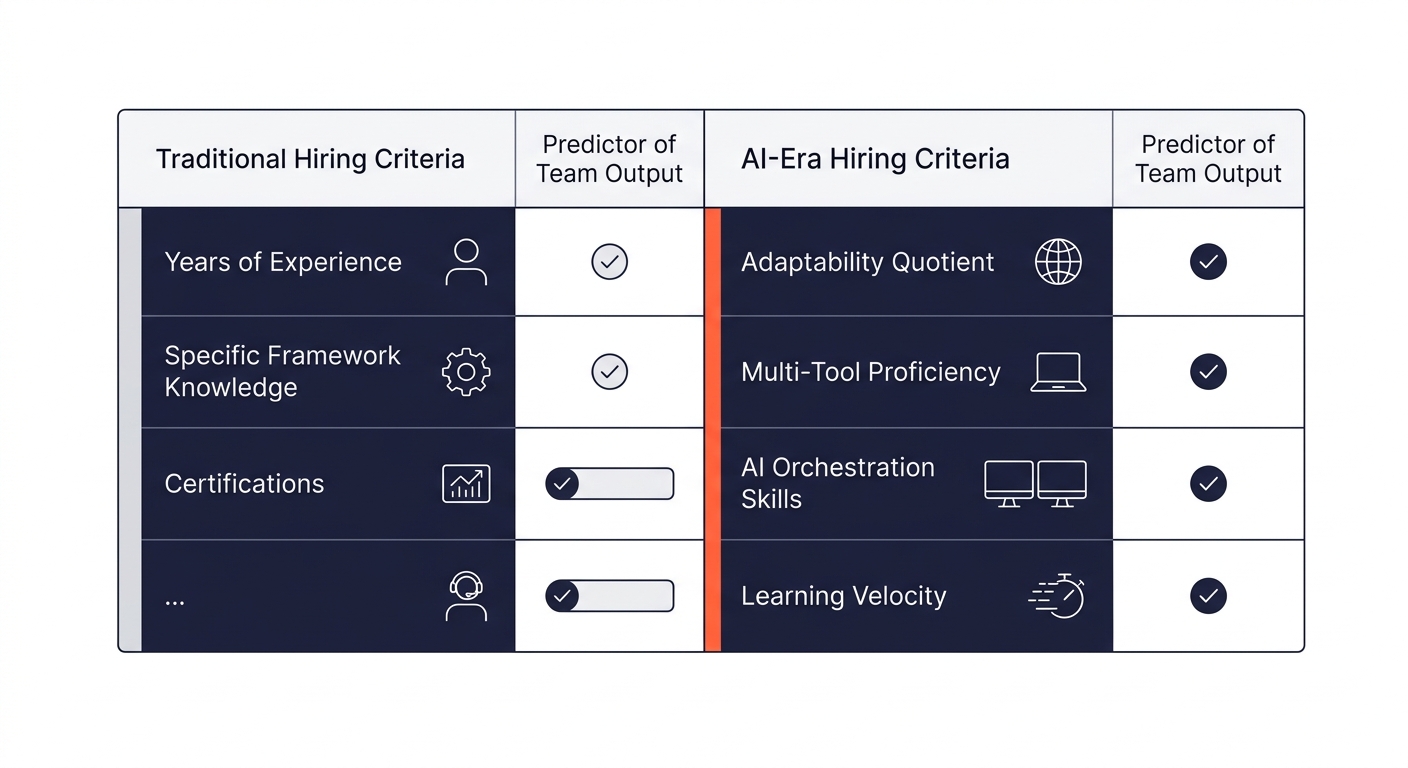

Hire for adaptability over years of experience

Philippine developers increasingly score well on what the industry calls Adaptability Quotient (AQ), a measure of how quickly engineers learn, validate, and adapt to evolving AI tools. This matters because the tooling landscape shifts every quarter. A team that mastered one code generation model six months ago needs to evaluate and potentially switch to a better option today. Teams that are methodology-focused rather than tool-dependent handle these transitions without losing velocity.

When advertising agencies use offshore teams to scale operations, the ones that succeed are usually hiring for this flexibility rather than checking boxes on specific framework experience. An engineer who has spent three years on a single stack but can’t adapt to AI-assisted workflows will produce less than an engineer with 18 months of experience who’s already built with multiple AI coding tools.

This rule bends for highly specialized domains. If you need someone with deep expertise in a specific database architecture or a regulated industry’s compliance stack, experience in that exact domain still matters more than general adaptability. But for most web application work, AQ is the better predictor of team performance.

Feed your AI tools architectural context, not isolated prompts

Here’s where offshore AI integration separates competent teams from exceptional ones. AI coding assistants generate better output when they understand the system they’re working within. Philippine teams that have moved past the “use Copilot for autocomplete” phase are now feeding runtime data, dependency graphs, and architectural documentation directly into their AI prompts. The result is that AI generates code consistent with the existing system’s patterns, not generic solutions that create drift.

As software architect Heemeng Foo wrote in a detailed analysis of AI-assisted architecture: “AI collapses feedback loops. When you can scaffold an API, generate tests, and wire monitoring in minutes, architectural decisions surface immediately — especially the bad ones.” When AI works fast but without context, you get architecturally inconsistent code at unprecedented speed. When AI works fast with context, you get a genuine co-architect.

This rule applies strongly during legacy modernization work, where architectural drift, tight coupling, and undocumented tribal knowledge create the worst possible conditions for context-free AI generation. It applies less strictly for greenfield projects where there’s no existing architecture to respect, though even there, feeding AI your design documents and API contracts produces noticeably better output.
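As a rough illustration of what "architectural context" can mean in practice, the sketch below assembles a prompt from design notes, a dependency graph, and API contracts before any task text is added. Everything here is hypothetical: the `ArchitecturalContext` class, the `build_prompt` function, the section labels, and the example services are assumptions for illustration, and the resulting string would still need to be sent to whatever assistant your team uses.

```python
from dataclasses import dataclass, field


@dataclass
class ArchitecturalContext:
    """Hypothetical container for the context a team keeps alongside its code."""
    design_notes: str
    api_contracts: dict[str, str] = field(default_factory=dict)
    dependency_graph: dict[str, list[str]] = field(default_factory=dict)


def build_prompt(task: str, module: str, ctx: ArchitecturalContext) -> str:
    """Prefix a task with only the context relevant to the module being changed."""
    deps = ctx.dependency_graph.get(module, [])
    contracts = [
        f"- {name}: {ctx.api_contracts[name]}"
        for name in deps
        if name in ctx.api_contracts
    ]
    sections = [
        "ARCHITECTURE NOTES", ctx.design_notes,
        f"DIRECT DEPENDENCIES OF {module}", ", ".join(deps) or "(none recorded)",
        "CONTRACTS TO RESPECT", "\n".join(contracts) or "(none recorded)",
        "TASK", task,
    ]
    return "\n".join(sections)


# Hypothetical example system.
ctx = ArchitecturalContext(
    design_notes="Services communicate over REST; no direct database access across services.",
    api_contracts={"billing": "POST /invoices returns 201 with an invoice id"},
    dependency_graph={"checkout": ["billing", "inventory"]},
)
prompt = build_prompt("Add retry handling to invoice creation.", "checkout", ctx)
print(prompt)
```

Scoping the context to the module's direct dependencies is a design choice that keeps the prompt short; a team doing legacy modernization might deliberately include more.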


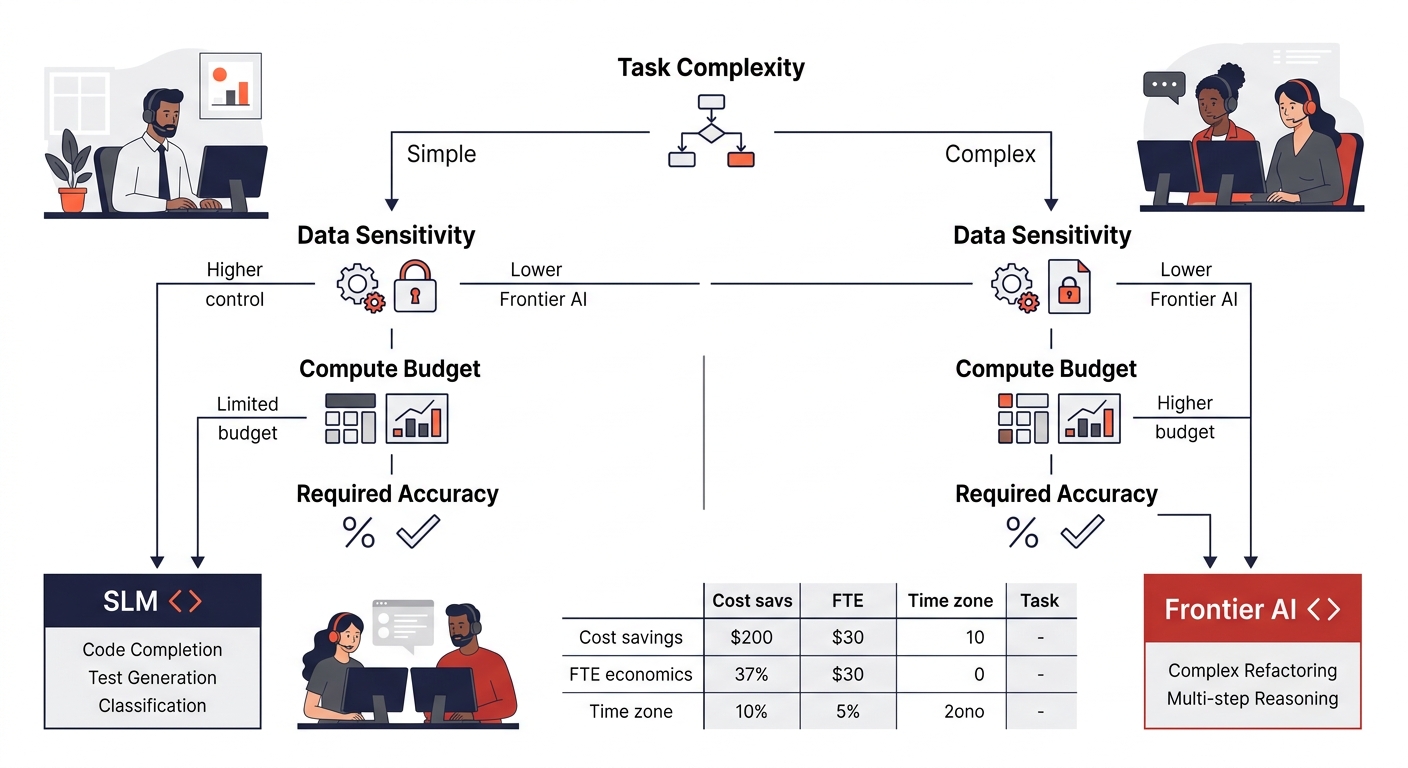

Test smaller models before defaulting to frontier APIs

US agencies tend to default to frontier models like GPT-4 or Claude for everything. Philippine development teams, working under tighter per-project budgets, have gotten pragmatic about using small language models (SLMs) for specific tasks: code completion, document classification, internal search, test generation. These smaller models deliver comparable results for bounded tasks at a fraction of the compute cost, and they run on-premise for clients with data sensitivity requirements.

Google’s April 2026 release of Gemma 4, an open model licensed under Apache 2.0 and optimized for agentic workflows, has given Philippine teams another tool for building custom, fine-tunable AI agents without depending on expensive API calls. For agencies building client projects where per-request API costs eat into margins, this approach keeps AI-powered web development outsourcing economically viable even at scale.

This rule breaks when your specific task genuinely requires frontier-model reasoning capability. Complex multi-step code refactoring, nuanced natural language understanding for user-facing features, and cross-system architectural analysis still benefit from the most capable models available. The skill is knowing which tasks need the expensive model and which don’t.
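That judgment can be written down as an explicit routing rule so it does not live only in one engineer's head. The sketch below is one possible shape, not a standard: the task labels are paraphrased from the examples in this section, and the defaults (unknown tasks start on the small model, on-premise requirements force the small model) are assumptions you would tune per project.

```python
from enum import Enum


class ModelTier(Enum):
    SMALL = "small"        # locally hosted or low-cost small language model
    FRONTIER = "frontier"  # hosted frontier API


# Task labels paraphrased from the text; the names themselves are hypothetical.
BOUNDED_TASKS = {
    "code_completion", "document_classification", "internal_search", "test_generation",
}
FRONTIER_TASKS = {
    "multi_step_refactoring", "user_facing_nlu", "cross_system_analysis",
}


def choose_tier(task_type: str, data_must_stay_on_premise: bool = False) -> ModelTier:
    """Pick a model tier for a task.

    Assumption: data that cannot leave the client's infrastructure rules out
    a hosted frontier API, so such tasks fall back to the small model.
    """
    if data_must_stay_on_premise:
        return ModelTier.SMALL
    if task_type in FRONTIER_TASKS:
        return ModelTier.FRONTIER
    # Bounded and unknown tasks start small; escalate only if quality falls short.
    return ModelTier.SMALL


for task in ("test_generation", "multi_step_refactoring", "release_notes"):
    print(task, "->", choose_tier(task).value)
```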

Align review cycles with time zones, not standups

The Philippines operates in GMT+8, which gives US teams on Eastern time a brief morning overlap and US teams on Pacific time an evening overlap. Trying to force synchronous standup meetings into these windows wastes the most productive hours on both sides. Better-run engagements use asynchronous review cycles: Philippine teams push completed work and architectural decision logs at their end of day, US-side leads review and comment during their morning, and the Philippine team picks up feedback at the start of their next shift.
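It helps to check where a Manila end-of-day handoff actually lands on US clocks. The sketch below uses Python's standard `zoneinfo` module; the 18:00 handoff time and the January date are assumptions chosen for illustration, and US daylight saving time shifts the results by an hour for part of the year.

```python
from datetime import datetime
from zoneinfo import ZoneInfo

MANILA = ZoneInfo("Asia/Manila")
US_ZONES = {
    "Eastern": ZoneInfo("America/New_York"),
    "Pacific": ZoneInfo("America/Los_Angeles"),
}


def handoff_local_times(manila_handoff: datetime) -> dict[str, datetime]:
    """Convert a Manila handoff time into each US zone's local time."""
    if manila_handoff.tzinfo is None:
        # Treat naive datetimes as Manila wall-clock time.
        manila_handoff = manila_handoff.replace(tzinfo=MANILA)
    return {name: manila_handoff.astimezone(tz) for name, tz in US_ZONES.items()}


# Assumed handoff: 18:00 Manila time on a winter weekday (US standard time).
handoff = datetime(2026, 1, 15, 18, 0, tzinfo=MANILA)
local_times = handoff_local_times(handoff)
for name, local in local_times.items():
    print(f"{name}: {local:%H:%M}")
```

Under these assumptions the handoff arrives at 05:00 Eastern and 02:00 Pacific, so the work is already waiting when US-side leads start their morning review.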

This cadence works especially well for the tiered model described earlier, because tier-three architectural decisions can be reviewed asynchronously while tier-one and tier-two work continues in parallel. Teams experienced in cross-cultural collaboration tend to adopt this pattern faster because they’ve already built communication norms that don’t depend on everyone being online simultaneously.

The exception is during active incident response or time-sensitive launches, where synchronous communication becomes worth the schedule disruption. Build those windows into your engagement agreement explicitly, so they’re the exception rather than an exhausting daily norm.

When These Rules Break Down

These rules assume you’re building or maintaining a product with real architectural complexity: a SaaS platform, a client-facing web application with multiple integrations, an internal tool that needs to scale. If your project only needs a dedicated WordPress team building marketing sites from established templates, the three-tier model adds overhead that a skilled two-person team with AI tools doesn’t need.

They also assume your agency has someone, whether internal or contracted, who can evaluate architectural decisions. Philippine teams handle execution and increasingly handle design, but the business context for what to build and why still needs to come from your side. SCIMUS research found that AI tools increase development speed by 30–40% and reduce operating costs by similar margins, but those gains evaporate when there’s no clear direction for the team to accelerate toward.

The Philippine development market’s strength in the scalable architecture that US agencies need is real, measurable, and growing. The question for US agencies isn’t whether these capabilities exist offshore. The question is whether your current engagement model is structured to take advantage of them, or whether you’re still buying headcount and hoping for the best.